Remote PPG (rPPG): The Definitive 2026 Guide to Contactless Vital Signs Monitoring

Your face tells your heart rate. Not metaphorically. Physically. With each beat, your heart pushes a small bolus of oxygenated blood into the capillaries just beneath your skin. That blood is redder than what came before it. A camera sensitive enough to catch the difference, combined with software that knows what to look for, can extract your pulse without touching you.

That is the premise of remote photoplethysmography (rPPG), and it has spent roughly 18 years moving from a curious optical demonstration into a commercially deployed medical technology with FDA clearances, venture-backed companies, and adoption across healthcare, insurance, automotive, and enterprise wellness.

This guide covers the science, the algorithms, the equity problems, the regulatory timeline, and the commercial players building on top of rPPG in 2026. It is written for engineers, clinicians, product managers, and researchers who want more than a summary.

1. What is rPPG? Foundation and history

Photoplethysmography has been a clinical tool since at least the 1930s. A pulse oximeter, the device clipped to your finger in every hospital bed, uses PPG: it shines red and infrared light through a fingertip and measures how much of each is absorbed. The ratio of absorption at the two wavelengths gives blood oxygen saturation; the timing of the absorption peaks gives heart rate.

Contact PPG requires physical proximity to skin. For decades, that was simply assumed.

The insight that changed things: you do not need to shine your own light. Reflected ambient light, off a human face, contains the same pulsatile signal. It is faint, buried in noise, and varies with head movement and lighting conditions, but it is there.

Verkruysse, Svaasand, and Nelson published the first rigorous demonstration in 2008 (DOI: 10.1364/OE.16.021434). Using a consumer digital camera and ordinary office lighting, they extracted the cardiac pulse, respiratory rate, and the relative contributions of blood oxygenation from video of a human face. The paper is short, the methods are straightforward, and it opened a research direction that has not stopped growing since.

The 2008 paper had one immediate implication: you already own the sensor. Any smartphone, laptop webcam, or security camera is, in principle, an rPPG device. The hardware cost is zero. The barrier is entirely in the software.

Why the face?

The forehead and cheeks have dense capillary beds close to the skin surface. They are also usually exposed. Most rPPG research has focused on the face for these reasons, but the signal exists wherever there is skin and sufficient lighting. Recent work published in ScienceDirect (2025) explored neck-focused rPPG using the PyVHR framework, finding the neck provides a viable alternative signal source, particularly useful when the face is partially obscured.

The green channel of an RGB camera carries the strongest pulsatile signal because hemoglobin absorption peaks near 540-580 nm, in the green portion of the visible spectrum. Early algorithms worked almost entirely in the green channel. Later work, particularly the chrominance-based methods developed through the 2010s, showed that using the relationship between color channels (rather than any single channel) dramatically reduces sensitivity to illumination changes.

The first decade of rPPG research (2008-2018)

After the Verkruysse et al. paper, the field spent roughly a decade refining classical signal processing approaches. Researchers developed increasingly sophisticated color space projections, motion compensation techniques, and frequency-domain estimation methods. The CHROM and POS algorithms emerged as the standard baselines. By 2017, the best classical methods achieved mean absolute error (MAE) of around 3-5 BPM under controlled conditions, with significant degradation under natural motion.

That accuracy plateau, combined with the rise of deep learning across computer vision, set up the next phase.

2. How rPPG works: The technical pipeline

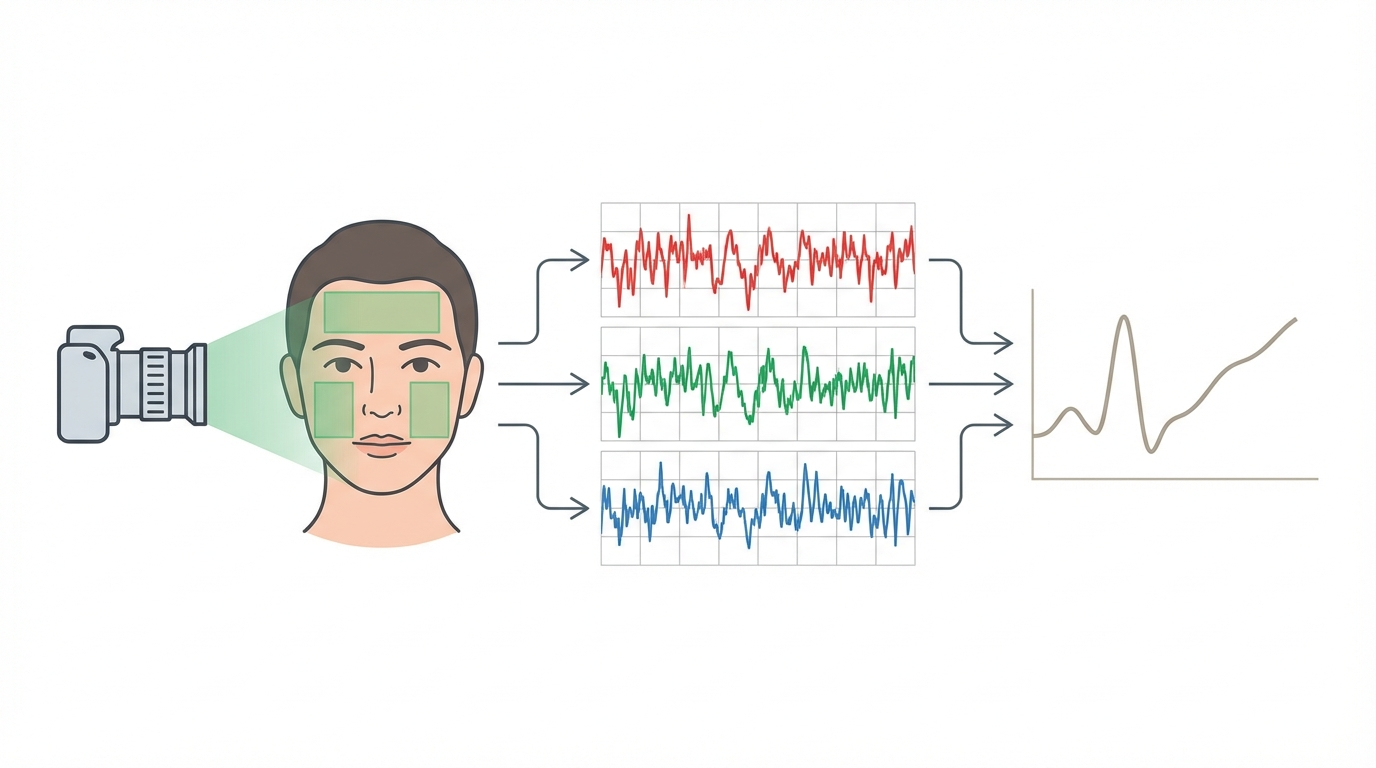

An rPPG system typically runs through five stages: face detection, region of interest selection, signal extraction, noise filtering, and vital sign estimation.

Face detection and tracking

Before any signal processing begins, the system needs to find the face in the frame and track it across time. Standard object detection and landmark tracking pipelines handle this. The challenge is that face tracking errors, where the bounding box drifts or loses lock, propagate directly into signal corruption. Robust rPPG depends on robust face tracking.

Modern implementations typically use lightweight landmark models that can locate 68-468 facial keypoints in real time, enabling precise region-of-interest masking rather than simple bounding box crops.

Region of interest selection

Not all pixels are equally useful. Skin pixels over bony areas (temples, forehead) tend to have stronger signal-to-noise ratios than skin over muscle or areas close to hair. Early systems used the entire face bounding box. More careful implementations identify and exclude non-skin regions: eyes, mouth, hair, and background.

The choice of region matters more than it might seem. A July 2025 Nature Digital Medicine study examined which face regions contribute most to heart rate accuracy in contactless monitoring, finding that the forehead and cheeks provided consistently stronger signal than the perioral or periorbital regions, particularly under motion.
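In code, region-of-interest selection often reduces to averaging the skin pixels inside a mask, frame by frame. The sketch below assumes the mask already exists (for example, built from facial landmarks by some upstream step not shown here); the function name `roi_mean_rgb` and the array layout are illustrative choices, not taken from any particular library.

```python
import numpy as np

def roi_mean_rgb(frames, mask):
    """Average the RGB values of the masked skin pixels in every frame.

    frames: array of shape (T, H, W, 3), one RGB image per time step.
    mask:   boolean array of shape (H, W), True where the pixel is usable skin
            (assumed to come from an upstream landmark or skin detector).
    Returns an array of shape (T, 3): one mean RGB triple per frame.
    """
    frames = np.asarray(frames, dtype=np.float64)
    mask = np.asarray(mask, dtype=bool)
    if frames.ndim != 4 or frames.shape[-1] != 3:
        raise ValueError("frames must have shape (T, H, W, 3)")
    if mask.shape != frames.shape[1:3]:
        raise ValueError("mask must have shape (H, W)")
    if not mask.any():
        raise ValueError("ROI mask selects no pixels")
    # Boolean indexing over the two spatial axes keeps only ROI pixels: (T, N, 3).
    roi_pixels = frames[:, mask, :]
    # Spatial averaging suppresses sensor noise; the result is the raw RGB trace.
    return roi_pixels.mean(axis=1)
```

Averaging over many pixels is what makes the sub-percent pulsatile variation detectable at all. A real system would also recompute the mask as the face moves, which this sketch leaves out.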

RGB to rPPG signal extraction

Given a sequence of skin pixel values over time, the next step is to extract the underlying pulsatile signal. The raw green channel intensity fluctuates with each heartbeat, but it also fluctuates in response to lighting changes, head rotation, and compression artifacts. Several algorithmic approaches have been developed to isolate the cardiac component:

GREEN (baseline): Uses only the mean green channel intensity. Fast but susceptible to illumination variation.

CHROM (de Haan and Jeanne, 2013): Works in the chrominance space, subtracting combinations of color channels to cancel specular reflections and illumination changes. Substantially more robust than single-channel methods.

POS (Plane-Orthogonal-to-Skin; Wang et al., 2017): Projects the temporally normalized RGB signal onto a plane orthogonal to the skin tone direction in color space, suppressing motion-related color changes. POS is widely used as a baseline in academic benchmarking.

ICA (Independent Component Analysis): Decomposes the multi-channel signal into statistically independent components, one of which corresponds to the cardiac pulse.

These classical methods work reasonably well under controlled conditions but degrade under motion and variable lighting. Deep learning has largely replaced them where accuracy matters most.
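To make the color-projection idea concrete, here is a minimal NumPy sketch of CHROM and POS operating on a (T, 3) trace of mean skin RGB values. It uses the projection coefficients from the published methods, but it is simplified: the CHROM function skips the band-pass filtering and windowing of the original, and the 1.6-second POS window is a commonly used default treated here as an assumption. Function names are illustrative.

```python
import numpy as np

def chrom(rgb):
    """Simplified CHROM: chrominance signals X, Y combined as X - alpha * Y.

    Simplification (assumption): no band-pass filter or overlap-add windowing.
    """
    rgb = np.asarray(rgb, dtype=np.float64)
    cn = rgb / rgb.mean(axis=0)                 # temporal normalization per channel
    x = 3.0 * cn[:, 0] - 2.0 * cn[:, 1]
    y = 1.5 * cn[:, 0] + cn[:, 1] - 1.5 * cn[:, 2]
    x, y = x - x.mean(), y - y.mean()
    std_y = y.std()
    alpha = x.std() / std_y if std_y > 0 else 0.0
    return x - alpha * y

def pos(rgb, fps, window_sec=1.6):
    """POS: project normalized RGB onto the plane orthogonal to skin tone."""
    rgb = np.asarray(rgb, dtype=np.float64)
    n = rgb.shape[0]
    win = int(round(window_sec * fps))
    if n < win:
        raise ValueError("signal shorter than one POS window")
    projection = np.array([[0.0, 1.0, -1.0],
                           [-2.0, 1.0, 1.0]])
    out = np.zeros(n)
    for start in range(n - win + 1):
        block = rgb[start:start + win]
        cn = block / block.mean(axis=0)         # normalize inside the window
        s = cn @ projection.T                   # two projected signals, (win, 2)
        std2 = s[:, 1].std()
        alpha = s[:, 0].std() / std2 if std2 > 0 else 0.0
        h = s[:, 0] + alpha * s[:, 1]
        out[start:start + win] += h - h.mean()  # overlap-add across windows
    return out
```

Both rows of the POS projection sum to zero, so a brightness change that scales all three channels equally cancels out, while the pulse, which affects the channels unequally, survives. CHROM gets a similar effect from the alpha-weighted subtraction.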

Frequency domain estimation

Once the rPPG waveform is extracted, heart rate is typically estimated by finding the dominant frequency in the cardiac band (0.7-4 Hz, corresponding to 42-240 BPM). Short-time Fourier transform or peak-picking in the frequency domain gives a heart rate estimate. For heart rate variability (HRV), inter-beat intervals are needed, which requires either time-domain peak detection or more sophisticated inverse methods.
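The frequency-domain step can be sketched in a few lines of NumPy. The example below finds the strongest spectral peak inside the 0.7-4 Hz cardiac band and converts it to BPM. The Hann window and the zero-padding to a 1 BPM frequency grid are illustrative choices, not requirements of any specific method, and the function name is hypothetical.

```python
import numpy as np

def estimate_heart_rate_bpm(signal, fps, low_hz=0.7, high_hz=4.0):
    """Return the dominant frequency in the cardiac band, expressed in BPM."""
    signal = np.asarray(signal, dtype=np.float64)
    signal = signal - signal.mean()              # remove the DC component
    windowed = signal * np.hanning(len(signal))  # reduce spectral leakage
    # Zero-pad to at least 60 s worth of samples so bins are at most 1 BPM apart.
    n_fft = max(len(signal), int(round(60 * fps)))
    power = np.abs(np.fft.rfft(windowed, n=n_fft)) ** 2
    freqs = np.fft.rfftfreq(n_fft, d=1.0 / fps)
    band = (freqs >= low_hz) & (freqs <= high_hz)
    if not band.any():
        raise ValueError("no frequency bins fall inside the cardiac band")
    peak_hz = freqs[band][np.argmax(power[band])]
    return 60.0 * peak_hz
```

Zero-padding only interpolates the spectrum; it does not add information. A 10-second window still cannot separate two rhythms closer than roughly 0.1 Hz (6 BPM), which is one reason short scans report a single averaged rate.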

Respiratory rate

Respiratory rate is encoded in the rPPG signal in two ways: as low-frequency intensity modulation from chest movement when the camera has a chest view, and as baseline wander in the facial rPPG signal caused by respiratory-driven blood volume changes. Frequency analysis in the 0.1-0.5 Hz range (6-30 breaths per minute) extracts the respiratory component.
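The baseline-wander route can be illustrated as follows: keep only the 0.1-0.5 Hz components of the facial rPPG trace, then read off the strongest one. This is a minimal sketch using an FFT mask as a crude band-pass filter; a production system would more likely use a proper filter design and a longer or sliding window. The function name is hypothetical.

```python
import numpy as np

def estimate_respiratory_rate(signal, fps, low_hz=0.1, high_hz=0.5):
    """Return (breaths per minute, band-limited respiratory waveform)."""
    signal = np.asarray(signal, dtype=np.float64)
    signal = signal - signal.mean()
    spectrum = np.fft.rfft(signal)
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fps)
    band = (freqs >= low_hz) & (freqs <= high_hz)
    if not band.any():
        raise ValueError("window too short to resolve the respiratory band")
    # Zero every bin outside the respiratory band, then invert the transform
    # to recover the slow baseline wander as a time-domain waveform.
    wander = np.fft.irfft(np.where(band, spectrum, 0.0), n=len(signal))
    peak_hz = freqs[band][np.argmax(np.abs(spectrum[band]))]
    return 60.0 * peak_hz, wander
```

Because breathing is slow, the window length matters more here than for heart rate: a 30-second window gives a frequency resolution of about 2 breaths per minute, and a 60-second window about 1.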

What the signal actually looks like

A raw rPPG trace looks superficially like an ECG or a contact PPG trace: a periodic waveform with peaks corresponding to cardiac cycles. The key differences are signal-to-noise ratio (rPPG SNR is far lower), amplitude (the cardiac component is typically 0.1-0.5% of the total intensity), and sensitivity to confounders. Every motion, every lighting change, every facial expression rides on top of the signal the algorithm is trying to find.

3. The deep learning revolution in rPPG

Classical signal processing methods for rPPG hit an accuracy ceiling around 2017. They could achieve reasonable results in controlled lab settings but degraded badly with natural head movement, variable lighting, and facial expressions. Deep learning changed that, and changed it fast.

DeepPhys (Chen and McDuff, 2018)

DeepPhys was the first end-to-end deep learning approach for rPPG. Rather than handcrafting color space projections, DeepPhys learned directly from raw video frames what signal to extract. The architecture used an attention mechanism to weight skin pixels more heavily than background regions. The performance gap over classical methods was immediate and large.

What made DeepPhys significant was not just the accuracy numbers but the framing: instead of asking "how do we better separate the cardiac signal from noise?" it asked "can we train a network to predict the physiological signal directly from video?" That shift opened the field to the full toolbox of deep learning research.

PhysNet (Yu et al., 2019)

Yu et al. introduced PhysNet using a 3D convolutional neural network (3D-CNN) that processed spatiotemporal video volumes rather than frame-by-frame analysis. 3D convolutions can capture motion patterns as features, giving the network a natural way to model and separate cardiac pulsation from head movement artifacts. PhysNet set a new accuracy benchmark on UBFC-rPPG and became a standard comparison point.

TS-CAN (NeurIPS 2020)

The Temporal Shift Convolutional Attention Network (TS-CAN) combined two ideas that had each shown promise separately. Temporal shift operations (borrowed from video understanding research) enable efficient temporal modeling without the computational cost of 3D convolutions. An attention mechanism, computed from a separate appearance branch, reweights the motion features toward skin regions that carry the pulse signal.

On the UBFC-rPPG benchmark, TS-CAN achieved MAE as low as 0.98 BPM with a Pearson correlation of 0.99 against ECG ground truth. Those numbers match or exceed contact-based consumer wearables under controlled conditions. The NeurIPS venue also brought rPPG research to the attention of the broader machine learning community, not just the biosensing niche.
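The temporal shift operation itself is simple enough to show directly. The NumPy sketch below follows the general temporal-shift idea from video understanding: one slice of channels is shifted one frame back in time, another slice one frame forward, and the rest are left alone, so a subsequent 2D convolution sees neighboring frames at no extra parameter cost. The (T, C, H, W) layout and the `fold_div=3` default are assumptions for illustration, not a reproduction of the TS-CAN code.

```python
import numpy as np

def temporal_shift(x, fold_div=3):
    """Shift a fraction of channels along the time axis.

    x: feature tensor of shape (T, C, H, W).
    The first C // fold_div channels take values from the next frame,
    the second C // fold_div channels take values from the previous frame,
    and the remaining channels are unchanged. Vacated positions are zero.
    """
    x = np.asarray(x)
    fold = x.shape[1] // fold_div
    out = np.zeros_like(x)
    out[:-1, :fold] = x[1:, :fold]                    # frame t sees frame t + 1
    out[1:, fold:2 * fold] = x[:-1, fold:2 * fold]    # frame t sees frame t - 1
    out[:, 2 * fold:] = x[:, 2 * fold:]               # untouched channels
    return out
```

The shift is pure memory movement with no multiplications, which is why it suits on-device inference.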

EfficientPhys (Liu et al., 2023)

On-device inference is a hard constraint for most commercial applications. You cannot stream high-resolution video to a cloud server with acceptable latency and reasonable cost. Liu et al. addressed this with EfficientPhys, redesigning the architecture for real-time performance on mobile hardware. EfficientPhys achieves accuracy comparable to heavier models while reducing computational cost enough for smartphone deployment.

This is where research and product development start to align. A model that works only on a GPU server is not a product for most use cases. EfficientPhys represented the field beginning to take deployment constraints seriously.

PhysFormer: Transformer-based rPPG

The transformer architecture's ability to model long-range temporal dependencies makes it attractive for rPPG, where the cardiac cycle spans several seconds and the algorithm needs to distinguish periodic cardiac patterns from aperiodic motion. PhysFormer applied self-attention mechanisms across video frame sequences. The approach demonstrated that transformers can learn which temporal patterns correspond to cardiac pulsation without being explicitly told the cardiac band frequency range, learning it from data instead.

rPPG-Toolbox (NeurIPS 2023)

Reproducibility in rPPG research had been a persistent problem. Different papers used different preprocessing, different evaluation splits, and different metrics, making direct comparison unreliable. The UbiComp Lab at the University of Washington released rPPG-Toolbox at NeurIPS 2023, a comprehensive benchmarking suite covering multiple models, datasets, and evaluation protocols.

rPPG-Toolbox is now the standard reference for comparing architectures. Its existence matters as much as any individual model result, because it transforms a fragmented literature into a coherent evidence base. Regulators and product teams alike benefit from a field where results are reproducible.

2024-2025: What the recent literature shows

The Sakib "State-of-the-Art Survey" (WIREs Data Mining, August 2025) provides a comprehensive taxonomy of methods from the 2008 origins through current transformer and multimodal approaches, covering both algorithm classes and dataset characteristics. It is the most complete single reference for the field as of publication.

PMC12181896 (2025) reviews heart rate measurement through the combination of rPPG and deep learning, with a focus on clinical applicability: what accuracy is needed for which clinical contexts, and where current methods still fall short.

A December 2025 Nature Digital Medicine study examined rPPG reliability under low illumination and elevated heart rates. Under office lighting at 100 lux, modern deep learning models maintained accuracy. Below 50 lux, most models showed significant degradation. Elevated heart rates above 140 BPM showed accuracy declines across all tested architectures. Both conditions are common in real clinical settings, which makes these findings practically important.

A July 2025 Nature Digital Medicine study investigated the role of face regions in contactless heart rate monitoring. The forehead showed the most consistent signal-to-noise ratio across subjects. The cheeks performed well in most subjects but showed higher variance. Periorbital regions underperformed, particularly with glasses wearers.

An MDPI Electronics paper from March 2025 titled "Advancements in Remote Photoplethysmography" reviewed the state of the field across algorithm classes, hardware considerations, and application domains. The Frontiers in Digital Health rPPG review from December 2025, informed by IntelliProve's real-world deployment data, provided one of the more grounded assessments of performance outside laboratory conditions.

A multimodal approach published in MDPI Sensors (2024), combining rPPG with other features using a random forest ensemble, achieved MAE of 3.057 BPM. This demonstrates that non-deep-learning approaches remain competitive for specific deployment constraints, particularly when computational resources are extremely limited.

Benchmark datasets

UBFC-rPPG: 42 subjects, indoor lighting, minimal motion. The most widely used benchmark. Because the recordings are so clean, strong results here do not guarantee real-world performance.

PURE: 10 subjects, six motion types including translation and rotation. Tests motion robustness more directly than UBFC-rPPG.

COHFACE: 160 videos with controlled illumination variations.

SCAMPS: 2,800 synthetic subjects, useful for pretraining given its scale.

UCLA-rPPG: Specifically designed for skin tone diversity evaluation, with subjects across the Fitzpatrick scale.

A model achieving MAE under 1 BPM on UBFC-rPPG and under 3 BPM on PURE is generally considered strong for 2025 standards. Real-world deployment typically sees MAE of 3-8 BPM depending on conditions.
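For readers who want to reproduce these figures on their own data, the headline metrics are straightforward to compute. The sketch below takes paired heart rate estimates and reference values in BPM and returns MAE, RMSE, and Pearson correlation; the function name and the sample numbers in the usage note are made up for illustration.

```python
import numpy as np

def heart_rate_metrics(predicted_bpm, reference_bpm):
    """Compute MAE, RMSE, and Pearson r between predictions and references."""
    predicted = np.asarray(predicted_bpm, dtype=np.float64)
    reference = np.asarray(reference_bpm, dtype=np.float64)
    if predicted.shape != reference.shape or predicted.size < 2:
        raise ValueError("need two equal-length arrays with at least 2 values")
    error = predicted - reference
    return {
        "mae": float(np.mean(np.abs(error))),
        "rmse": float(np.sqrt(np.mean(error ** 2))),
        "pearson_r": float(np.corrcoef(predicted, reference)[0, 1]),
    }
```

For example, predictions of 70, 80, 90, and 100 BPM against references of 72, 79, 90, and 103 BPM give an MAE of 1.5 BPM. Note that a high Pearson correlation can coexist with a systematic offset, so it should be read alongside MAE, not instead of it.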

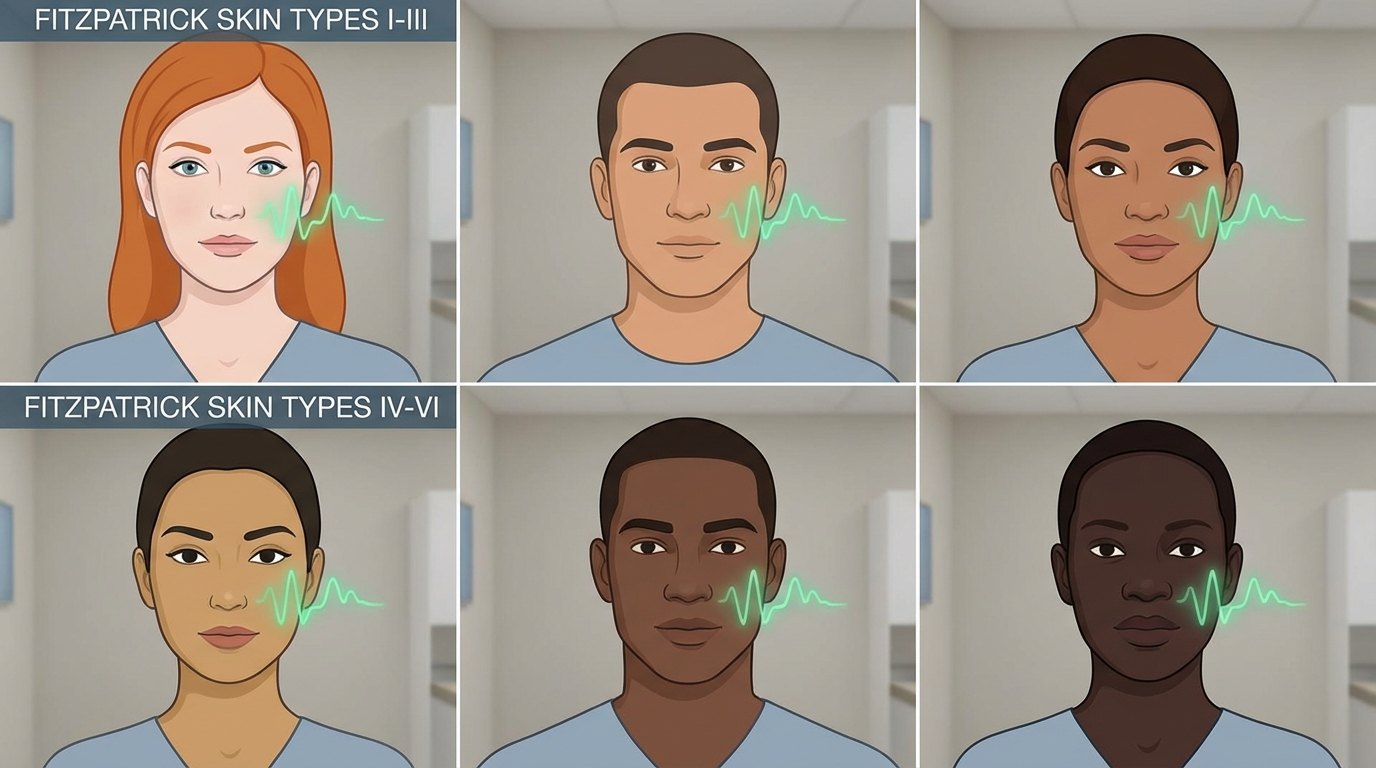

4. Skin tone equity and bias

This is the part of rPPG research that deserves more attention than it typically gets in product announcements.

Melanin, the pigment that determines skin tone, absorbs visible light. More melanin means more absorbed light, which means less reflected light reaching the camera. Because rPPG depends on detecting tiny reflectance changes (0.1-0.5% of the total signal), a lower baseline reflectance directly reduces signal-to-noise ratio. The effect is not subtle.

Nowara et al. (2020) quantified this directly: rPPG accuracy degraded by 30-50% across darker Fitzpatrick skin types compared to lighter ones. This was not a minor variation. It meant that for a significant portion of the global population, rPPG systems validated in lab studies would perform substantially worse in deployment.

The sources of bias are multiple. First, the physics: visible-light reflectance decreases with melanin content. Second, the datasets: UBFC-rPPG and most early training sets were heavily skewed toward lighter skin tones, which means deep learning models trained on them learned features that work well for that distribution and generalize poorly. Third, the evaluation metrics: mean absolute error across a dataset averages over all subjects, which can mask poor performance on subgroups if those subgroups are small enough.
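The third point, that a pooled average can hide subgroup failure, is easy to demonstrate. The sketch below reports MAE overall and per group; the group labels and the numbers in the usage note are invented purely to illustrate the arithmetic and are not measured results.

```python
import numpy as np

def mae_by_group(predicted_bpm, reference_bpm, groups):
    """Return MAE for the whole dataset and for each subgroup label."""
    abs_error = np.abs(
        np.asarray(predicted_bpm, dtype=np.float64)
        - np.asarray(reference_bpm, dtype=np.float64)
    )
    groups = np.asarray(groups)
    if abs_error.shape != groups.shape:
        raise ValueError("one group label is required per measurement")
    report = {"overall": float(abs_error.mean())}
    for label in np.unique(groups):
        # Average only the measurements that belong to this subgroup.
        report[str(label)] = float(abs_error[groups == label].mean())
    return report
```

With eight hypothetical subjects labeled "I-II" each off by 1 BPM and two labeled "V-VI" each off by 6 BPM, the overall MAE is 2.0 BPM, which looks acceptable, while the darker-skin subgroup sits at 6.0 BPM. Reporting the stratified table alongside the pooled number is the minimum needed to see the gap.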

Approaches to address the bias

Near-infrared (NIR) imaging: Melanin absorbs less at NIR wavelengths than in visible light. NIR cameras can reduce the skin tone gap significantly. The tradeoff is hardware cost and the fact that standard cameras, which are the deployment target for most commercial rPPG, capture little NIR.

Chrominance-based methods (CHROM, POS): By working in color ratios rather than absolute intensities, these methods are less sensitive to the absolute reflectance level. They partially, but not fully, address the skin tone issue.

Diverse training data: Models trained on datasets like UCLA-rPPG, which explicitly includes diverse skin tones, show reduced bias compared to those trained only on UBFC-rPPG. Dataset curation is probably the highest-leverage intervention available to practitioners right now.

Adaptive preprocessing: Some implementations apply tone-specific normalization or adaptive filtering calibrated to the estimated Fitzpatrick type of each subject. The evidence for this approach is mixed.

Shen.AI has published specific numbers here: their Multi-Tonal Sensing Technology, developed across a dataset of over 5,000 participants, improved SDNN (a key HRV metric) accuracy by 18% for darker skin tones compared to their previous approach. That is a meaningful improvement, and it came from deliberate dataset and algorithm investment.

The broader industry needs more of this. An rPPG product that is FDA-cleared for heart rate measurement but performs materially worse for darker-skinned patients is not a neutral tool. Bias documentation should be a standard part of any regulatory submission and product specification. The FDA will likely require it for future clearances, as it has increasingly required demographic subgroup analysis for other SaMD.

5. Vital signs beyond heart rate

Heart rate is the easiest target for rPPG and the first to achieve regulatory clearance. The ambition of the field extends further.

Respiratory rate

Breathing modulates the facial blood volume signal in two ways: direct coupling through cardiovascular-respiratory interaction, and mechanical coupling from chest and neck movement. Respiratory rate extraction from rPPG is conceptually straightforward and has reached clinical-grade accuracy in controlled conditions. FaceHeart received FDA 510(k) clearance for respiratory rate in April 2025 (k243966), and Mindset Medical followed with their second clearance in June 2025. These clearances validate that the evidence base for rPPG-derived respiratory rate meets the threshold for Class II medical device classification.

Heart rate variability (HRV)

HRV measures the variation in time between successive heartbeats. It is used clinically as an indicator of autonomic nervous system function, stress response, and cardiac health. Extracting accurate inter-beat intervals from an rPPG signal requires significantly higher temporal resolution and signal quality than extracting mean heart rate. The signal-to-noise requirements are tighter, motion artifacts cause larger relative errors, and video compression (which averages intensity values across frames) can directly destroy the millisecond-level timing needed for HRV metrics like SDNN and RMSSD.
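The two metrics named above are defined on inter-beat intervals, so the computation is short once beat times are available. The sketch below assumes the pulse peaks have already been detected and are given as timestamps in seconds; peak detection itself is the hard part and is not shown. The function name is illustrative.

```python
import numpy as np

def hrv_from_peak_times(peak_times_s):
    """Compute SDNN and RMSSD (both in milliseconds) from beat timestamps."""
    peak_times = np.asarray(peak_times_s, dtype=np.float64)
    if peak_times.size < 3:
        raise ValueError("need at least 3 beats to compute HRV metrics")
    ibi_ms = np.diff(peak_times) * 1000.0        # inter-beat intervals
    successive_diff = np.diff(ibi_ms)            # change from one interval to the next
    return {
        "mean_ibi_ms": float(ibi_ms.mean()),
        # SDNN: sample standard deviation of the intervals.
        "sdnn_ms": float(ibi_ms.std(ddof=1)),
        # RMSSD: root mean square of successive interval differences.
        "rmssd_ms": float(np.sqrt(np.mean(successive_diff ** 2))),
    }
```

The sensitivity to timing is visible in the units: at 30 frames per second, consecutive frames are about 33 ms apart, so peak times taken straight from frame indices carry quantization error on the same scale as the variability being measured. Interpolating the waveform around each peak is a common way to reduce that error.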

State-of-the-art approaches achieve reasonable HRV correlation under controlled conditions. In free-living settings, results are more variable. No FDA clearance for camera-based HRV exists as of early 2026.

Blood pressure

Cuffless blood pressure estimation from rPPG is one of the most commercially attractive targets and one of the most contested claims in the field. The physiological mechanisms that could enable it include pulse transit time (PTT, the time for a pulse to travel between two arterial points), the shape of the PPG waveform, and machine learning models trained to correlate waveform features with blood pressure readings.
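As an illustration of the pulse transit time idea only, the sketch below estimates the delay between two pulse waveforms (say, from two skin regions) by finding the lag that maximizes their normalized cross-correlation. It does not estimate blood pressure, and the function name, the two-region setup, and the 0.3-second search range are all assumptions made for this example.

```python
import numpy as np

def estimate_delay_seconds(reference, delayed, fps, max_lag_s=0.3):
    """Return the lag (seconds) at which `delayed` best matches `reference`.

    A positive result means `delayed` lags behind `reference`.
    """
    a = np.asarray(reference, dtype=np.float64)
    b = np.asarray(delayed, dtype=np.float64)
    if a.shape != b.shape:
        raise ValueError("both signals must have the same length")
    a, b = a - a.mean(), b - b.mean()
    max_lag = int(round(max_lag_s * fps))
    best_lag, best_score = 0, -np.inf
    for lag in range(-max_lag, max_lag + 1):
        # Align a(t) with b(t + lag) over the overlapping samples.
        if lag >= 0:
            x, y = a[:len(a) - lag], b[lag:]
        else:
            x, y = a[-lag:], b[:len(b) + lag]
        norm = np.linalg.norm(x) * np.linalg.norm(y)
        score = np.dot(x, y) / norm if norm > 0 else -np.inf
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag / fps
```

The estimate is quantized to one frame period (about 33 ms at 30 fps) unless the correlation peak is interpolated, which hints at why transit-time measurements from ordinary video are demanding.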

The accuracy claims for camera-based blood pressure are inconsistent in the literature. A June 2025 JMIR Formative Research study conducted at Singapore General Hospital evaluated rPPG for blood pressure and hemoglobin estimation in a clinical population, representing one of the more rigorous real-world evaluations. NuraLogix and Binah.ai both claim blood pressure estimation capability, but neither has FDA clearance for it, and the FDA has been consistent: camera-based blood pressure remains in the investigational category.

SpO2 (blood oxygen saturation)

SpO2 from a camera requires capturing at least two wavelengths with different hemoglobin absorption characteristics, analogous to a pulse oximeter. Most smartphone cameras can do this in principle, but the signal is extremely weak and highly susceptible to skin tone variation because melanin absorbs across the visible spectrum. Claims of camera-based SpO2 accuracy need careful scrutiny. No FDA clearance exists for this indication as of early 2026.
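The two-wavelength principle is usually expressed as a "ratio of ratios": the pulsatile (AC) amplitude divided by the steady (DC) level in one channel, divided by the same quantity in a second channel. The sketch below computes only that ratio. Turning it into an SpO2 percentage requires an empirical calibration curve, which is deliberately not included here because any coefficients would be invented. Which camera channels to use, and estimating AC by standard deviation, are assumptions of this example.

```python
import numpy as np

def ratio_of_ratios(channel_a, channel_b):
    """Return (AC_a / DC_a) / (AC_b / DC_b) for two intensity traces.

    AC is approximated by the standard deviation of each trace and DC by
    its mean; both traces are assumed to be band-limited to the pulse.
    """
    a = np.asarray(channel_a, dtype=np.float64)
    b = np.asarray(channel_b, dtype=np.float64)
    dc_a, dc_b = a.mean(), b.mean()
    if dc_a <= 0 or dc_b <= 0:
        raise ValueError("DC levels must be positive")
    ac_a, ac_b = a.std(), b.std()
    if ac_b == 0:
        raise ValueError("second channel has no pulsatile component")
    # Dividing each AC by its DC cancels overall brightness differences
    # between the channels; the remaining ratio reflects relative absorption.
    return (ac_a / dc_a) / (ac_b / dc_b)
```

Since the pulsatile component is a fraction of a percent of the total intensity in each channel, the quotient of two such quantities amplifies noise considerably, which is consistent with the caution urged above.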

Stress and metabolic biomarkers

NuraLogix's Anura platform claims stress levels, diabetes risk scores, and blood biomarkers from a 30-second face scan. The physiological plausibility varies: stress has known cardiovascular correlates (heart rate, HRV, vasomotor tone) that rPPG could detect indirectly. Diabetes risk scores and blood biomarker predictions from camera video are a different claim class, relying on machine learning correlations that are not yet supported by clear mechanistic pathways or peer-reviewed validation at clinical-grade accuracy. In the US, these features carry an "Investigational Use Only" label.

6. Commercial landscape: Who's building rPPG in 2026

Eight companies represent the current spectrum of commercial rPPG, ranging from regulatory-cleared consumer apps to B2B SDKs to IP licensing programs.

Binah.ai (Israel)

Binah.ai is a software-only health data platform built around rPPG. Their SDK, now at version 5.11 (released September 2025), enables 35-second vital sign spot checks via smartphone or webcam. They raised a $13.5M Series B from Maverick Ventures Israel and have focused on enterprise deployment: insurance carriers using it for underwriting data collection, healthcare systems for remote triage, and corporate wellness programs.

Binah.ai expanded their platform in August 2023 to support wrist PPG sensors in addition to camera-based measurement, positioning itself as a sensor-agnostic health data aggregation layer rather than a purely camera-based technology. A partnership with Endava added cuffless blood pressure estimation via smartphone. They also added fall detection to the platform, broadening beyond rPPG-native use cases. Binah.ai does not hold FDA 510(k) clearance for their rPPG functionality.

Shen.AI

Shen.AI's SDK combines rPPG with remote ballistocardiography (rBCG), which detects the mechanical forces from the heartbeat as captured by the camera. Their headline differentiator is the Multi-Tonal Sensing Technology, addressing the skin tone accuracy gap directly. Across a validation dataset of over 5,000 participants, they report an 18% improvement in SDNN accuracy for darker skin tones compared to their earlier approach.

The measurement set includes blood pressure, heart rate, respiratory rate, SpO2, and HRV, with a 50-second scan time. The SDK targets telehealth platforms, insurance onboarding, and enterprise wellness. Both mobile and desktop deployments are supported. Shen.AI does not hold FDA 510(k) clearance as of early 2026.

NuraLogix (Toronto, Canada)

NuraLogix holds a patent on what they call Transdermal Optical Imaging (TOI), a specific approach to extracting cardiovascular information from video of the face. Their consumer product, Anura, has been deployed for several years. Their enterprise product, Anura MagicMirror, released a next-generation version in March 2025 with 4G connectivity support, targeting retail health kiosks and pharmacy deployments.

The 30-second Anura scan claims heart rate, blood pressure, SpO2, HRV, stress levels, diabetes risk, and blood biomarker assessments. The metabolic and biomarker features were expanded in July 2025. In the United States, NuraLogix's blood pressure and metabolic features carry an "Investigational Use Only" designation. The company has a stronger commercial presence in Asia, where regulatory pathways for some of these features have been more accessible. No FDA clearance for any of these indications has been announced.

PanopticAI (Hong Kong)

PanopticAI received FDA 510(k) clearance in January 2025 for their contactless pulse rate measurement application, making them one of the first companies globally to clear this regulatory hurdle. The company is an HKSTP (Hong Kong Science and Technology Parks) incubatee. Their clearance establishes them as a medical device company and gives them a regulatory credential that differentiates them from the many rPPG products operating in wellness or research contexts.

Mindset Medical (Arizona)

Mindset Medical's Informed Vital Core (IVC) app has accumulated two FDA 510(k) clearances in under a year: pulse rate cleared in November 2024, followed by respiratory rate cleared in June 2025. This regulatory progress, combined with a Series A financing round in June 2024, positions Mindset Medical as one of the most aggressively regulatory-focused players in the US market.

Two clearances in such a short window suggest the company built their regulatory submission strategy carefully, with each indication supported by strong clinical evidence. The pulse rate clearance likely provided a validated technical foundation that made the respiratory rate submission faster.

FaceHeart (Taiwan)

FaceHeart's FH Vitals SDK combines computer vision, rPPG, and deep learning to measure heart rate, respiratory rate, blood pressure, SpO2, and HRV in a 50-second scan. Their FDA 510(k) for heart rate was followed by a second clearance for respiratory rate in April 2025 (k243966, classified as Class II Software as a Medical Device).

The Taiwan-based team has demonstrated that non-US companies can navigate the FDA 510(k) pathway successfully. Their dual clearances put them in the same small group as Mindset Medical as companies with multi-indication regulatory validation in the US.

Philips (Netherlands)

Philips runs an IP licensing program for what they call "Biosensing" technology based on rPPG, covering heart rate and breathing rate extraction using both standard visible-light cameras and infrared cameras. Rather than fielding their own consumer product, Philips licenses the technology to OEMs, giving them revenue from the rPPG ecosystem without the cost of product development and go-to-market.

The Philips patent portfolio in rPPG is extensive, built over more than a decade of research. Any company building a camera-based vital signs product needs to assess Philips IP exposure before shipping. The licensing program means Philips participates in the commercial success of the field without the regulatory and distribution overhead.

IntelliProve (Belgium)

IntelliProve is a Belgian rPPG health technology company with a research profile that extends to published academic work. A December 2025 Frontiers in Digital Health review of rPPG was informed by IntelliProve technology and real-world deployment data. Their positioning is in B2B telehealth and occupational health, with actual clinical deployments rather than just SDK sales. The Frontiers publication represents one of the more transparent disclosures of real-world rPPG performance data in 2025, distinguishing IntelliProve from vendors whose accuracy claims are based entirely on controlled-environment studies.

7. FDA 510(k) clearances: The regulatory milestone tracker

Before 2024, no rPPG product had FDA 510(k) clearance. The regulatory category did not exist as a clearly established predicate device class, and companies operating in wellness contexts could avoid the 510(k) pathway by staying out of medical claims. That changed fast.

What 510(k) clearance means

A 510(k) clearance is not the same as FDA approval. The 510(k) pathway allows a device to be marketed if it can demonstrate substantial equivalence to a previously cleared predicate device. For Class II Software as a Medical Device (SaMD), this means demonstrating that the software performs its claimed function within acceptable accuracy tolerances, with a defined intended use, defined patient population, and defined performance testing protocol.

Clearance for a specific indication does not extend to other indications. A company cleared for pulse rate cannot market their product as measuring blood pressure without a separate clearance. This is why the cleared indications are so narrowly defined and why you should read the clearance letter, not just the product description.

The clearance timeline

November 2024: Mindset Medical IVC, pulse rate. This was the first FDA 510(k) clearance for a camera-based rPPG pulse rate application. It established the predicate and set a template for subsequent submissions.

January 2025: PanopticAI, contactless pulse rate. PanopticAI followed with their own pulse rate clearance, demonstrating that the 510(k) pathway Mindset Medical had established was repeatable.

April 9, 2025: FaceHeart FH Vitals SDK, respiratory rate (k243966). The first clearance for respiratory rate via camera. This extended the cleared indication set beyond pulse rate for the first time.

June 2025: Mindset Medical IVC, respiratory rate (second clearance). Mindset Medical extended their cleared indication set to include respiratory rate, giving them the broadest regulatory coverage in the US among camera-only rPPG companies.

What remains uncleared

Blood pressure, blood oxygen saturation (SpO2), HRV, stress, and metabolic biomarkers all remain outside the cleared indication set as of early 2026. Companies can measure and display these values in wellness contexts, but they cannot market them as medical devices without clearance, and they cannot bill for them in clinical contexts.

The FDA's consistent position is that the evidence base for camera-derived blood pressure does not yet meet the threshold for 510(k) clearance. The accuracy variability, the skin tone bias, and the lack of a well-understood physical mechanism comparable to pulse transit time for wrist-based cuffless blood pressure all contribute to this.

What the clearance wave signals

Four clearances in eight months means the research-to-regulatory pipeline is working. The academic literature has matured enough that companies can build rigorous clinical validation studies. The predicate device framework has been established. Subsequent clearances will be faster and cheaper than the first ones.

The next likely regulatory expansions are HRV (closest to pulse rate in technical requirements) and possibly SpO2 in controlled clinical populations. Blood pressure clearance is further away and will require large, demographically diverse clinical studies with results stratified by skin tone.

8. Use cases and verticals

Telehealth

The most direct application. A patient on a video call can have their heart rate and respiratory rate measured without any hardware beyond their existing camera. During COVID-19, remote consultations normalized the idea of clinical care via video. rPPG extends what is clinically possible in that setting. Mindset Medical's IVC app explicitly targets this use case.

Insurance underwriting and wellness programs

Insurance companies face a persistent problem: accurately assessing health risk at enrollment or periodic review without expensive in-person examinations. A 35-second smartphone scan that produces vital sign data has commercial value here. Binah.ai's enterprise deployments are substantially in this category.

The key constraint in this use case is data integrity: can the insurer trust that the measurement was taken by the applicant under conditions representative of their resting state? Anti-spoofing and measurement protocol validation become important product requirements.

Driver monitoring systems

Drowsiness and medical events while driving are significant safety problems. In-cabin cameras, now standard in many vehicles for driver attention monitoring, can also perform rPPG. The rPPG driver monitoring segment was valued at $412 million in 2024 with an 18.2% CAGR, driven by fleet safety requirements and regulatory pressure in commercial trucking and aviation.

This use case runs continuously and in highly variable lighting conditions, which stresses current rPPG algorithms harder than any other deployment context.

Hospital and clinical settings

Contactless monitoring in hospital rooms reduces the infection risk from sensor placement and removal, reduces patient discomfort, and enables continuous monitoring without wearable hardware. The population here includes post-surgical patients, ICU patients, and burn patients, for whom contact sensors are particularly difficult to apply. Singapore General Hospital's evaluation study (JMIR Formative Research, June 2025) represents this direction.

Consumer wellness

Smartphones measuring resting heart rate, HRV, or respiratory rate as part of a daily health routine. This is the highest-volume potential deployment but also the lowest-accuracy context: variable lighting, frequent motion, and no controlled measurement protocol. Consumer wellness is not currently an FDA clearance target, and accuracy claims need to be modest.

Pharmacy and retail health kiosks

NuraLogix's MagicMirror product targets this directly. A face scan at a pharmacy kiosk provides a quick health snapshot that could guide referrals or supplement annual physicals. This requires neither clinical-grade accuracy nor 510(k) clearance if positioned as wellness screening rather than diagnosis.

Occupational health monitoring

IntelliProve's real-world deployments include occupational health contexts: measuring employee stress and fatigue indicators in high-demand work environments. The ethical and privacy dimensions here are more complex than clinical or consumer contexts, because the employer-employee relationship changes what "voluntary" means for consent.

9. Challenges and limitations

The honest picture of rPPG in 2026 includes real limitations that marketing materials tend to minimize.

Motion artifacts

Head movement is the single largest source of error. Even slight nodding or turning introduces intensity changes far larger than the pulsatile signal. Deep learning models have improved motion robustness substantially, but they have not solved it. In free-living conditions with natural head motion, accuracy degrades to MAE values of 5-10 BPM for many systems.

The standard workaround is to instruct users to sit still during measurement. This works for structured measurements (a telehealth consultation, an insurance onboarding scan) but rules out continuous monitoring in most realistic scenarios.

Illumination variability

Office lighting, daylight from windows, fluorescent flicker, and mixed color temperature lighting all stress rPPG algorithms. The December 2025 npj Digital Medicine study showed that accuracy drops below 50 lux for most systems. Outdoor use, especially in direct sunlight that creates hard shadows, is a particularly difficult environment.

Infrared cameras sidestep some of this by working in a spectral range less affected by visible lighting variation, but they require different hardware than what most deployment targets carry.

Video compression artifacts

Most cameras compress video using codecs like H.264 that treat small, spatially distributed color changes as noise to be eliminated. The rPPG signal is, by definition, a small, spatially distributed color change. Compression ratios typical of video streaming (consumer smartphones, WebRTC video calls) can destroy a significant fraction of the pulsatile signal.

This constraint is underappreciated. Researchers typically work with raw or minimally compressed video. Deployed products work with whatever the camera hardware and OS pipeline output. Some companies handle this by processing at the raw sensor level before compression, but that requires deep camera integration that most SDK-based deployments cannot achieve.

Skin tone bias

As covered in Section 4, the 30-50% accuracy gap for darker skin tones documented by Nowara et al. (2020) is real and not fully solved. The industry is making progress, but a product that performs materially worse for darker-skinned users is a clinical equity problem, regardless of how impressive the aggregate accuracy number looks.

Any rPPG product used in a clinical context should publish accuracy data stratified by Fitzpatrick skin type. If a vendor cannot provide this, that is a meaningful gap.
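As a rough illustration of what "stratified by Fitzpatrick skin type" means in practice, the sketch below computes mean absolute error per group alongside the aggregate figure. The function name `mae_by_group`, the grouping into three bands, and every number in `records` are made up for illustration; they are not results from any study or vendor.

```python
from collections import defaultdict

def mae_by_group(records):
    """Return {group: (mean absolute error in BPM, sample count)}.

    `records` is an iterable of (group, reference_bpm, estimated_bpm) tuples.
    """
    errors = defaultdict(list)
    for group, reference_bpm, estimated_bpm in records:
        errors[group].append(abs(estimated_bpm - reference_bpm))
    return {g: (sum(e) / len(e), len(e)) for g, e in sorted(errors.items())}

# Hypothetical data for illustration only:
# (Fitzpatrick band, reference heart rate, rPPG heart rate), all in BPM.
records = [
    ("I-II", 62.0, 63.0),
    ("I-II", 75.0, 74.0),
    ("III-IV", 68.0, 70.0),
    ("III-IV", 80.0, 78.0),
    ("V-VI", 71.0, 75.0),
    ("V-VI", 66.0, 62.0),
]

stratified = mae_by_group(records)
overall = sum(abs(est - ref) for _, ref, est in records) / len(records)

for group, (mae, n) in stratified.items():
    print(f"Fitzpatrick {group}: MAE {mae:.1f} BPM (n={n})")
print(f"Aggregate: MAE {overall:.1f} BPM")
```

In this toy data the aggregate MAE sits near 2.3 BPM while the V-VI group sits at 4.0 BPM, which is the kind of gap a single headline number hides. Reporting the per-group sample count matters too, since a small group can make a stratified figure look better or worse than it is.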

The regulatory gap

Pulse rate and respiratory rate are cleared. Everything else is not. Blood pressure, SpO2, HRV, and metabolic biomarkers are commercially available in several products but are either labeled "investigational" in the US or sold in wellness contexts that avoid FDA oversight.

This creates a confusing landscape for clinicians, patients, and procurement teams. A product can be partially cleared while offering uncleared features under the same interface, and distinguishing which claims are regulatory-backed requires reading the fine print carefully.

Privacy

rPPG requires continuous video capture of the face. In clinical contexts, consent is reasonably handled. In ambient monitoring contexts (workplaces, retail, vehicles), the privacy implications are substantial. Continuous biometric monitoring without clear consent and purpose limitation raises legitimate concerns that the technical community tends not to address.

Data minimization approaches, where the rPPG signal is extracted locally and only the vital sign output (not the video) leaves the device, help but do not resolve the consent question.
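A minimal sketch of the data minimization pattern described above, assuming the device has already reduced each video frame to a single mean skin-pixel intensity. The pulse estimate here is a plain spectral peak search over a plausible heart rate band, chosen for brevity; it is not the method of any specific product. The names `estimate_bpm` and `on_device_measurement`, the band limits, and the synthetic trace are all assumptions for illustration.

```python
import math

def estimate_bpm(trace, fps, lo_hz=0.7, hi_hz=4.0, step_hz=0.01):
    """Estimate pulse rate from a 1-D intensity trace via a spectral peak search."""
    mean = sum(trace) / len(trace)
    centered = [x - mean for x in trace]
    best_hz, best_power = lo_hz, -1.0
    steps = int(round((hi_hz - lo_hz) / step_hz))
    for k in range(steps + 1):
        hz = lo_hz + k * step_hz
        w = 2.0 * math.pi * hz / fps  # radians per frame at this frequency
        re = sum(x * math.cos(w * n) for n, x in enumerate(centered))
        im = sum(x * math.sin(w * n) for n, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_hz, best_power = hz, power
    return 60.0 * best_hz

def on_device_measurement(frame_means, fps):
    """Build the only payload that leaves the device: a number, no pixels."""
    return {"pulse_rate_bpm": round(estimate_bpm(frame_means, fps))}

# Synthetic stand-in for per-frame mean intensities over a 10 s scan at 30 fps:
# a 1.2 Hz (72 BPM) pulse riding on a slow illumination drift.
fps = 30.0
frame_means = [
    120.0 + 0.5 * math.sin(2.0 * math.pi * 1.2 * n / fps) + 0.01 * n
    for n in range(300)
]

payload = on_device_measurement(frame_means, fps)
print(payload)
```

The design point is the shape of `payload`: the frames and the intensity trace stay in local memory, and the transmitted record carries only the derived vital sign.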

10. The road ahead: 2026 and beyond

The rPPG field in 2026 has solved the easy problems and is now working on the hard ones.

What has been established

Heart rate and respiratory rate measurement by camera works, has been validated in clinical studies, and now has FDA regulatory clearance as Class II SaMD. The algorithms, both classical and deep learning, are well understood. The benchmark datasets exist. The research community has tooling (rPPG-Toolbox) for reproducible evaluation. Several companies have working commercial deployments with real users.

This is not nothing. Three years ago, the FDA 510(k) path for camera-based vital signs was untested. Now it has precedent, and that precedent makes subsequent clearances faster and cheaper to obtain.

Where the work is

HRV from camera video is the most technically mature uncleared indication. The inter-beat interval timing requirements are demanding, but the physiological mechanism is clear and the research base is deep. A well-resourced company with a clinically validated dataset could reasonably pursue 510(k) clearance for HRV within the next two years.
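To see why inter-beat interval timing is demanding, consider what frame-rate resolution does to a common HRV metric. The sketch below computes RMSSD (root mean square of successive differences) from a set of intervals, then again after snapping each interval to whole frame periods. The 30 fps frame rate, the interval values, and the helper names are assumptions for illustration, and snapping whole intervals is a simplification: many pipelines interpolate the pulse waveform to recover sub-frame peak times.

```python
import math

def rmssd_ms(ibi_ms):
    """Root mean square of successive differences between inter-beat intervals."""
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

def quantize_to_frames(ibi_ms, fps):
    """Snap each interval to a whole number of frame periods (no interpolation)."""
    frame_ms = 1000.0 / fps
    return [round(ibi / frame_ms) * frame_ms for ibi in ibi_ms]

# Hypothetical inter-beat intervals in milliseconds, for illustration only.
true_ibi = [810.0, 825.0, 800.0, 840.0, 815.0, 830.0]

fps = 30.0  # assumed camera frame rate; one frame lasts about 33.3 ms
seen_ibi = quantize_to_frames(true_ibi, fps)

print(f"frame period: {1000.0 / fps:.1f} ms")
print(f"RMSSD from true intervals:     {rmssd_ms(true_ibi):.1f} ms")
print(f"RMSSD from frame-snapped ones: {rmssd_ms(seen_ibi):.1f} ms")
```

With these made-up intervals the beat-to-beat differences (15 to 40 ms) are about the size of one frame period, so the frame-snapped RMSSD lands near 33 ms against a true value near 26 ms. A heart rate average is barely affected by the same snapping, which is one way to see why pulse rate was cleared first.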

Blood pressure is the commercially highest-value target and the hardest to clear. The mechanism is contested, the accuracy variance is high, the skin tone equity requirements will demand large and diverse validation cohorts, and the FDA's bar will be high because inaccurate blood pressure readings carry genuine patient risk. This is a five-year problem, not a two-year one.

SpO2 from camera requires hardware and algorithm advances that are not yet mature. The visible-light approach is limited by melanin interference. NIR-augmented or multi-spectral camera solutions exist but require hardware not present in standard devices.

Continuous monitoring requires solving motion artifacts well enough to work in real-world conditions rather than structured measurement sessions. This is primarily an algorithmic problem, but it may also require tighter camera integration than current SDKs achieve.

Multimodal fusion is a genuine technical direction: combining rPPG with audio (voice analysis for stress and respiratory patterns), rBCG (Shen.AI's current approach), thermal imaging, or wrist-based PPG sensors. Fusion approaches can compensate for the limitations of any single modality and may enable higher accuracy on difficult vital signs like blood pressure.
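One textbook way to combine estimates from several modalities is inverse-variance weighting, sketched below. This is offered only as an example of how fusion can down-weight a noisy modality; it is not a description of how Shen.AI or any other vendor fuses signals. The function name, the readings, and the uncertainties are hypothetical, and the method assumes independent, unbiased errors, which real sensors sharing a camera may not satisfy.

```python
def fuse_estimates(estimates):
    """Inverse-variance weighted mean of (value, std_dev) pairs.

    Returns (fused_value, fused_std_dev). Assumes independent, unbiased errors.
    """
    weights = [1.0 / (std * std) for _, std in estimates]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    return fused, (1.0 / total) ** 0.5

# Hypothetical heart-rate readings in BPM with assumed uncertainties.
readings = [
    (74.0, 4.0),  # rPPG during head motion: noisier in this made-up example
    (70.0, 2.0),  # rBCG: steadier in this made-up example
]

fused_bpm, fused_std = fuse_estimates(readings)
print(f"fused: {fused_bpm:.1f} BPM, std {fused_std:.2f} BPM")
```

The fused value leans toward the steadier reading, and its uncertainty is lower than either input's, which is the basic argument for fusion when no single modality is reliable on its own.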

The market trajectory

The remote patient monitoring market was $40 billion in 2023 and is projected to reach $88 billion by 2030, a 12% compound annual growth rate. AI-enabled remote monitoring specifically carries a 27.13% CAGR projection through 2034. PPG biosensors (contact and remote combined) were $549 million in 2024, forecast at $1.2 billion by 2032.
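The growth rates above can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The short sketch below applies it to the figures quoted in the preceding paragraph; the function name `cagr` is just a label for this example.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.12 means 12% per year)."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Figures quoted in the text above.
rpm = cagr(40.0, 88.0, 2030 - 2023)     # remote patient monitoring, $ billions
ppg = cagr(549.0, 1200.0, 2032 - 2024)  # PPG biosensors, $ millions

print(f"Remote patient monitoring 2023-2030: {rpm:.1%}")
print(f"PPG biosensors 2024-2032:            {ppg:.1%}")
```

The first figure comes out just under 12%, consistent with the quoted rate. The PPG biosensor forecast implies roughly 10% per year, a rate the source figures do not state directly.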

These numbers reflect a genuine shift in where healthcare monitoring happens: from clinical facilities to homes, vehicles, workplaces, and phones. rPPG is one technology in that shift. It has unique advantages in requiring zero new hardware for smartphone-based deployment, which is why the addressable market is large even at current accuracy levels.

The research questions that matter

Two npj Digital Medicine papers published in 2025 addressed specific gaps: low-light performance and the role of face regions. The field is running out of clean lab validation papers and moving toward messier, more realistic evaluation environments. That is the right direction.

The skin tone equity question needs more than a technical fix. It needs diverse datasets built with deliberate recruitment, papers with stratified results as standard practice (not a supplementary table), and regulatory guidance that treats demographic performance disparities as a material safety issue.

The rPPG-Toolbox gave the field a standardized benchmarking environment. What comes next is a standardized real-world validation protocol: a study design that any company or academic group can follow to produce results comparable across laboratories and populations.

A practical summary for 2026

If you are evaluating rPPG for a product or clinical application, here is what the evidence actually supports.

Heart rate and respiratory rate measurement under controlled conditions (seated subject, adequate lighting, structured scan) has FDA regulatory precedent and achievable accuracy. Expect MAE of 1-3 BPM for heart rate under good conditions and 3-8 BPM in real-world settings.

HRV, blood pressure, and SpO2 from camera are real research directions with commercial products, but none are FDA-cleared, and accuracy varies widely with conditions and skin tone. Treat these as screening indicators rather than clinical measurements.

Skin tone matters. Ask any vendor for accuracy data stratified by Fitzpatrick type. If they cannot provide it, that tells you something.

Motion robustness is the primary factor distinguishing research-grade accuracy from deployed-product accuracy. Test in conditions representative of your actual use case, not controlled lab settings.

The regulatory situation is moving faster than most observers expected in 2023. The precedents set in 2024-2025 make additional clearances, for more indications and more companies, more likely in 2026 and beyond.

ChatPPG Research Team covers remote photoplethysmography research, commercial developments, and clinical applications. This article was last reviewed March 2026.

References and further reading

Key publications cited

- Verkruysse, W., Svaasand, L.O., & Nelson, J.S. (2008). Remote plethysmographic imaging using ambient light. Optics Express, 16(26), 21434-21445. DOI: 10.1364/OE.16.021434

- Chen, W. & McDuff, D. (2018). DeepPhys: Video-Based Physiological Measurement Using Convolutional Attention Networks. ECCV 2018. arXiv: 1805.07888

- Yu, Z., Li, X., & Zhao, G. (2019). Remote Photoplethysmograph Signal Measurement from Facial Videos Using Spatio-Temporal Networks. BMVC 2019. arXiv: 1905.02419

- Liu, X., Hill, B., Jiang, Z., Patel, S., & McDuff, D. (2023). EfficientPhys: Enabling Simple, Fast and Accurate Camera-Based Cardiac Measurement. IEEE/CVF WACV 2023. arXiv: 2110.04447

- Liu, X., Narayanswamy, G., et al. (2024). rPPG-Toolbox: Deep Remote PPG Toolbox. NeurIPS 2023. GitHub: ubicomplab/rPPG-Toolbox

- Sakib, S. et al. (2025). A State-of-the-Art Survey of Remote Photoplethysmography for Contactless Health Parameters Sensing. WIREs Data Mining and Knowledge Discovery. DOI: 10.1002/widm.70039

- Comprehensive review of heart rate measurement using rPPG and deep learning (2025). PMC. PMC12181896

- Remote photoplethysmography for health assessment: a review informed by IntelliProve technology (2025). Frontiers in Digital Health. DOI: 10.3389/fdgth.2025.1667423

- The reliability of remote photoplethysmography under low illumination and elevated heart rates (2025). npj Digital Medicine. DOI: 10.1038/s41746-025-02192-y

- The role of face regions in remote photoplethysmography for contactless heart rate monitoring (2025). npj Digital Medicine. DOI: 10.1038/s41746-025-01814-9

- Advancements in Remote Photoplethysmography (2025). MDPI Electronics, 14(5), 1015. DOI: 10.3390/electronics14051015

- Remote photoplethysmography technology for blood pressure and hemoglobin level assessment (2025). JMIR Formative Research. DOI: 10.2196/60455

- Nowara, E.M., et al. (2020). The effect of skin type on performance of rPPG models. Systematic evaluation of rPPG across Fitzpatrick skin types.

- de Haan, G. & Jeanne, V. (2013). Robust pulse rate from chrominance-based rPPG. IEEE Transactions on Biomedical Engineering, 60(10), 2878-2886.

- Wang, W., den Brinker, A.C., Stuijk, S., & de Haan, G. (2017). Algorithmic principles of remote PPG. IEEE Transactions on Biomedical Engineering, 64(7), 1479-1491.

Commercial players

- Binah.ai — Video-based health monitoring SDK

- Shen.AI — rPPG + rBCG multimodal sensing SDK

- NuraLogix (Anura) — Transdermal Optical Imaging platform

- PanopticAI — FDA-cleared contactless pulse rate

- Mindset Medical — Informed Vital Core app (FDA-cleared)

- FaceHeart — FH Vitals SDK (FDA-cleared for HR and RR)

- Philips Biosensing by rPPG — IP licensing program

- IntelliProve — rPPG health assessment platform

Open-source tools

- rPPG-Toolbox — Deep Remote PPG Toolbox (NeurIPS 2023)

- PyVHR — Python Video-based Heart Rate framework
