Independent Component Analysis for rPPG: Separating Blood Flow from Motion

Independent Component Analysis (ICA) was one of the first rigorous approaches to extracting rPPG signals from video. Learn how ICA separates blood volume pulsation from motion and illumination artifacts.

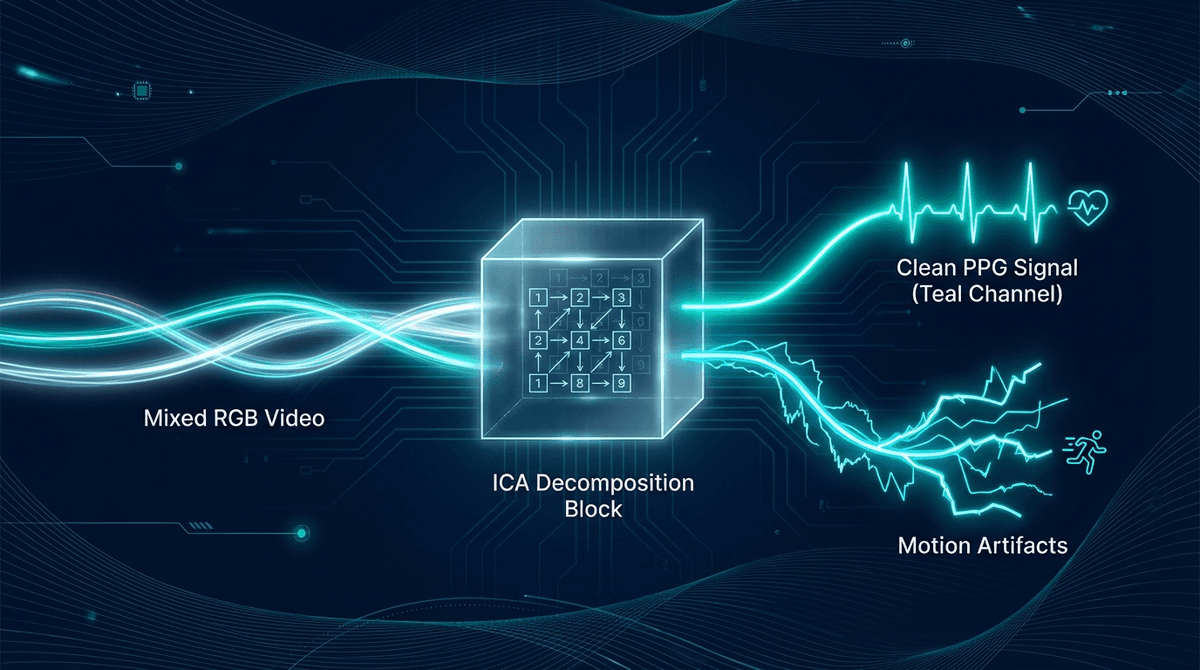

The raw pixel values of a face video contain a mixture of signals: blood volume pulsation, motion artifacts, illumination changes, skin specular reflections, and camera noise. The challenge of rPPG is isolating the physiological signal from this mixture without knowing in advance exactly what shape the cardiac signal will take or how large the artifacts will be.

Independent Component Analysis (ICA) offered one of the first principled mathematical solutions to this problem. Before deep learning transformed rPPG in the 2019-2023 period, ICA-based methods were among the best-performing approaches in the field. Understanding ICA for rPPG illuminates the signal-theoretic foundations that even modern neural network methods build upon.

The Mixing Problem in rPPG

Consider a camera measuring reflected light from a patch of facial skin. Averaging the patch's pixels in each frame yields three time series of intensities, one per color channel: R(t), G(t), and B(t). These are the observed signals.

What generated these observations? The observed signals are, to a useful approximation, linear combinations of independent underlying source signals:

- Blood volume pulse (BVP): The periodic absorption change from hemoglobin pulsation

- Skin specular component: Direct reflection that varies with head movement and illumination angle

- Diffuse illumination variation: Slow changes in ambient light level

- Camera and shot noise: Temporally uncorrelated

- Motion artifact: Pixel intensity changes from skin displacement

The ICA formulation assumes that the observed signals are linear mixtures of independent sources, and attempts to recover those sources by finding a separating matrix that maximizes the statistical independence of the outputs.

This is formally identical to the cocktail party problem — separating individual voices from a room where multiple people speak simultaneously into multiple microphones.
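In matrix form, the model described above can be written compactly. The symbols here are notation introduced for illustration: x(t) stacks the observed channels [R(t), G(t), B(t)], s(t) stacks the unknown sources, A is the unknown mixing matrix, and W is the separating matrix that ICA estimates.

```latex
\mathbf{x}(t) = \mathbf{A}\,\mathbf{s}(t), \qquad
\hat{\mathbf{s}}(t) = \mathbf{W}\,\mathbf{x}(t), \qquad
\mathbf{W} \approx \mathbf{P}\,\mathbf{D}\,\mathbf{A}^{-1}
```

P is a permutation matrix and D a diagonal scaling matrix: independence alone cannot fix the order, sign, or amplitude of the recovered sources, so ICA output has no inherent ordering.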

The Poh et al. ICA Method (2010)

Poh, McDuff, and Picard at MIT published the foundational rPPG ICA paper in 2010 (DOI: 10.1364/OE.18.010762). Their approach:

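- Select facial ROI: Detect the face with Viola-Jones or a similar detector and keep a rectangular ROI inside the face box (Poh et al. used the central 60% of the box width at full height)

- Extract channel means: Compute spatial mean of R, G, B channels over the ROI for each frame, producing three time series

- Preprocess: Normalize each of the three channel time series to zero mean and unit variance. Reimplementations often also detrend and bandpass filter the traces (roughly 0.75-4 Hz, i.e. 45-240 bpm)

- Apply ICA: Decompose the three channels into three independent components. Poh et al. used the JADE algorithm; FastICA (Hyvärinen & Oja, 2000) is a common substitute in reimplementations

- Select the BVP component: ICA returns components in arbitrary order, so one must be chosen. The 2010 paper simply took the second component, which usually carried the pulse; later work, including the authors' 2011 follow-up, selects the component with the strongest spectral peak in the heart rate band

- Estimate heart rate: Apply FFT to the selected component and identify the dominant frequency within 0.75-4 Hz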

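Poh et al. validated this method on 12 participants against a finger BVP sensor, reporting a root-mean-square heart rate error of roughly 2.3 bpm with subjects sitting still and roughly 4.6 bpm during natural movement. These were strong results for a webcam-based, non-contact approach in 2010.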
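The pipeline steps above can be sketched in a few dozen lines. This is a minimal illustration, not a reproduction of the paper's code: it assumes the channel means are already available as a (3, T) NumPy array, uses a small hand-written symmetric FastICA for the decomposition, and picks the component with the strongest in-band spectral peak. The function names (`fast_ica`, `band_peak`, `estimate_hr_ica`) are hypothetical.

```python
import numpy as np

def sym_decorrelate(w):
    """Make the rows of w orthonormal: w <- (w w^T)^(-1/2) w."""
    s, u = np.linalg.eigh(w @ w.T)
    return (u / np.sqrt(s)) @ u.T @ w

def fast_ica(x, n_iter=200, tol=1e-6, seed=0):
    """Symmetric FastICA (tanh nonlinearity) on x of shape (channels, samples)."""
    x = x - x.mean(axis=1, keepdims=True)
    d, e = np.linalg.eigh(np.cov(x))
    z = (e / np.sqrt(d)) @ e.T @ x  # whitened observations
    rng = np.random.default_rng(seed)
    w = sym_decorrelate(rng.standard_normal((x.shape[0],) * 2))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        # Fixed-point update: E[z g(w^T z)] - E[g'(w^T z)] w, for every row of w
        w_new = g @ z.T / z.shape[1] - np.diag((1.0 - g ** 2).mean(axis=1)) @ w
        w_new = sym_decorrelate(w_new)
        done = np.max(np.abs(np.abs(np.diag(w_new @ w.T)) - 1.0)) < tol
        w = w_new
        if done:
            break
    return w @ z  # unit-variance components, arbitrary order and sign

def band_peak(sig, fs, lo=0.75, hi=4.0):
    """Return (peak power, peak frequency in Hz) inside the heart rate band."""
    power = np.abs(np.fft.rfft(sig - sig.mean())) ** 2
    freqs = np.fft.rfftfreq(sig.size, d=1.0 / fs)
    band = (freqs >= lo) & (freqs <= hi)
    k = np.argmax(power[band])
    return power[band][k], freqs[band][k]

def estimate_hr_ica(rgb, fs):
    """Heart rate in bpm from a (3, T) array of R, G, B channel means."""
    # Normalize each channel to zero mean and unit variance
    x = (rgb - rgb.mean(axis=1, keepdims=True)) / rgb.std(axis=1, keepdims=True)
    components = fast_ica(x)
    peaks = [band_peak(c, fs) for c in components]
    _, best_freq = max(peaks)  # component with the strongest in-band peak
    return 60.0 * best_freq
```

Calling `estimate_hr_ica(rgb, fs=30.0)` on a 30-second clip gives a frequency resolution of 1/30 Hz, or 2 bpm; longer windows or spectral interpolation would be needed for finer estimates.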

What ICA Assumes (and Why It Sometimes Fails)

The ICA model has several important assumptions:

Linear mixing: The observed signals are linear combinations of sources. For rPPG, this is approximately true but not exact — the relationship between blood volume and pixel intensity involves nonlinear terms from the tissue optics.

Statistical independence of sources: The underlying sources must be statistically independent. Blood volume pulsation is largely independent of voluntary head motion. Breathing, however, both moves the head and chest and modulates heart rate (respiratory sinus arrhythmia), so respiration-driven artifacts are partly coupled to the cardiac signal and violate this assumption.

Enough observation channels: With only three channels (R, G, B), ICA can separate at most three independent components. The source list above already names five, so when more than three are active at once, each recovered component remains a blend of several sources and the separation is incomplete.

Stationarity: Standard ICA assumes the mixing matrix is constant over the analysis window. But if the illumination changes rapidly or the face moves substantially, the mixing matrix changes, violating the stationarity assumption.

These limitations explain why ICA-based rPPG, while effective in controlled conditions, degrades more than modern methods under motion and illumination variability. The CHROM and POS algorithms (de Haan & Jeanne, 2013; Wang et al., 2017) improved on ICA specifically by incorporating explicit skin chrominance models that provide better constraints than the ICA independence assumption alone.

Spatial ICA: Using Multiple ROIs

An extension of the basic ICA approach uses multiple facial ROIs as separate channels, increasing the number of observations available for source separation.

Instead of a single set of R, G, B channel means, spatial ICA might use 15-20 ROIs (sub-regions of the face such as left and right forehead, each cheek, nose, and chin), each with its own channel means. Depending on whether one channel or all three are kept per ROI, this gives an observation vector of 15 to 60 dimensions, which lets ICA recover more sources than the three available from a single ROI.

The tradeoff is that with more ROIs, the assumption that they all observe the same mixing scenario weakens — different face regions have different vascular density, depth, and motion characteristics.
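A minimal sketch of how such a multi-ROI observation matrix might be assembled. It assumes the frames are already loaded as a (T, H, W, 3) NumPy array and that a face box (x, y, w, h) comes from some detector; the regular grid, the box format, and the name `roi_traces` are illustrative choices, not taken from a specific paper.

```python
import numpy as np

def roi_traces(frames, box, grid=(4, 5)):
    """Mean R, G, B per grid cell per frame.

    frames: array of shape (T, H, W, 3)
    box:    (x, y, w, h) face bounding box in pixels
    grid:   (rows, cols) of sub-regions inside the box
    Returns an array of shape (rows * cols * 3, T).
    """
    x, y, w, h = box
    rows, cols = grid
    y_edges = np.linspace(y, y + h, rows + 1).astype(int)
    x_edges = np.linspace(x, x + w, cols + 1).astype(int)
    traces = []
    for r in range(rows):
        for c in range(cols):
            cell = frames[:, y_edges[r]:y_edges[r + 1], x_edges[c]:x_edges[c + 1], :]
            traces.append(cell.mean(axis=(1, 2)).T)  # (3, T) for this ROI
    return np.concatenate(traces, axis=0)
```

With a 4 x 5 grid this yields 20 ROIs and a 60-row observation matrix. Neighboring ROIs are strongly correlated, so the covariance of such a matrix is usually close to singular, and a PCA reduction before ICA is typically needed.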

ICA Variants for rPPG

Temporal ICA with Sliding Windows

Rather than applying ICA to the entire video segment, sliding window ICA applies the decomposition over overlapping time windows (typically 10-30 seconds). This adapts to non-stationary mixing at the cost of extra computation, and because ICA returns components in arbitrary order and sign, the cardiac component has to be reselected in every window.

A new ICA solution is estimated for each window, and heart rate is estimated as the dominant frequency in the selected component for that window. The final heart rate estimate is smoothed across windows.
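The windowing and smoothing logic can be sketched independently of the per-window estimator. In the sketch below, `estimate_hr` is a hypothetical callable that takes one (3, n) window and returns bpm; in an ICA pipeline it would run the decomposition and component selection on that window. A plain green-channel spectral peak stands in for it here so the example stays self-contained. The window length, step, and median smoothing width are assumed values.

```python
import numpy as np

def green_peak_bpm(window, fs, lo=0.75, hi=4.0):
    """Stand-in per-window estimator: spectral peak of the green channel."""
    g = window[1] - window[1].mean()
    power = np.abs(np.fft.rfft(g)) ** 2
    freqs = np.fft.rfftfreq(g.size, d=1.0 / fs)
    band = (freqs >= lo) & (freqs <= hi)
    return 60.0 * freqs[band][np.argmax(power[band])]

def sliding_window_hr(rgb, fs, estimate_hr=green_peak_bpm,
                      win_s=20.0, step_s=5.0, smooth=3):
    """Per-window heart rate plus a running-median smoothed version.

    rgb: (3, T) channel means; smooth: odd number of windows in the median.
    """
    n_win, n_step = int(win_s * fs), int(step_s * fs)
    starts = range(0, rgb.shape[1] - n_win + 1, n_step)
    raw = np.array([estimate_hr(rgb[:, s:s + n_win], fs) for s in starts])
    half = smooth // 2
    smoothed = np.array([
        np.median(raw[max(0, i - half):i + half + 1]) for i in range(raw.size)
    ])
    return raw, smoothed
```

A running median is used rather than a mean so that a single window with a wrongly selected component does not drag the neighboring estimates with it.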

Constrained ICA

Standard ICA selects the component with highest spectral power in the heart rate band, but this selection is heuristic. Constrained ICA formulations incorporate prior knowledge about the cardiac signal — its expected frequency range, spectral shape, or spatial distribution — as constraints that guide the decomposition toward physiologically meaningful solutions.

Zhang et al. (2015, DOI: 10.1109/TNSRE.2015.2416394) demonstrated constrained ICA for rPPG that achieved 20-30% lower heart rate estimation error than standard ICA under motion conditions by constraining the solution to have the spectral properties expected of a cardiac signal.

Joint Temporal-Spatial ICA

This approach performs ICA jointly across both time and spatial dimensions, simultaneously factoring out shared temporal patterns and spatial patterns. This can separate specular reflections (which have distinctive spatial patterns) from diffuse absorptive changes (which are more spatially uniform) more cleanly than temporal ICA alone.

Relationship to CHROM and POS Algorithms

The CHROM algorithm (de Haan & Jeanne, 2013, DOI: 10.1109/TBME.2013.2266196) can be understood as a constrained ICA where the mixing model is explicitly defined by the skin chrominance assumption. Rather than letting ICA discover the mixing matrix from data, CHROM hard-codes a theoretically derived model: it combines the normalized R, G, and B channels into two chrominance signals chosen so that intensity and specular changes largely cancel while the color change caused by blood absorption remains.

This is a form of Model-Based ICA — trading the generality of blind source separation for the stability and robustness of a physically motivated model. Under stable illumination conditions, model-based approaches like CHROM typically outperform blind ICA because the model provides better constraints. Under highly variable conditions where the model is violated, the advantage can reverse.

The POS (Plane-Orthogonal-to-Skin) method (Wang et al., 2017, DOI: 10.1109/TBME.2016.2609282) extends this model-based approach by projecting the temporally normalized RGB signal onto a plane orthogonal to the skin tone direction, which suppresses intensity variations. It achieved state-of-the-art performance in its era and remained competitive even as deep learning methods emerged.
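To make the contrast with blind ICA concrete, here is a minimal sketch of the fixed projections the two methods use in place of a learned separating matrix. The coefficients are the ones commonly quoted from the two papers; the bandpass filtering that both methods normally apply is omitted for brevity, and the 1.6-second POS window and the function names are assumptions of this sketch.

```python
import numpy as np

def chrom(rgb):
    """CHROM-style pulse estimate from a (3, T) array of R, G, B means."""
    # Normalize each channel by its temporal mean and remove the DC level
    r, g, b = rgb / rgb.mean(axis=1, keepdims=True) - 1.0
    x = 3.0 * r - 2.0 * g            # first chrominance signal
    y = 1.5 * r + g - 1.5 * b        # second chrominance signal
    alpha = x.std() / y.std()        # balances the two signals
    return x - alpha * y

def pos(rgb, fs, win_s=1.6):
    """POS-style pulse estimate with overlap-adding over short windows."""
    n = rgb.shape[1]
    length = int(round(win_s * fs))
    proj = np.array([[0.0, 1.0, -1.0],
                     [-2.0, 1.0, 1.0]])  # both rows sum to zero
    h = np.zeros(n)
    for start in range(0, n - length + 1):
        c = rgb[:, start:start + length]
        cn = c / c.mean(axis=1, keepdims=True)   # temporal normalization
        s = proj @ cn
        # Small epsilon guards against a flat second signal (sketch-only choice)
        p = s[0] + (s[0].std() / (s[1].std() + 1e-12)) * s[1]
        h[start:start + length] += p - p.mean()
    return h
```

Because every projection row sums to zero (and the two CHROM signals are balanced by alpha), a brightness change that scales all three channels equally is largely removed without any statistical estimation, which is the stability advantage described above.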

Deep Learning as Learned Source Separation

Modern neural network rPPG methods can be interpreted as learned source separation — the network implicitly learns to identify and suppress the non-cardiac components of the video signal (motion, illumination) and amplify the cardiac component.

End-to-end learning allows the network to discover separation functions that go beyond the linear mixing assumption of classical ICA. The cost is reduced interpretability and a need for labeled training data.

Several hybrid approaches combine explicit ICA-based preprocessing with neural network refinement — using ICA to roughly separate the cardiac and motion components, then applying a neural network to refine the cardiac estimate. This combines the interpretability and sample efficiency of model-based approaches with the expressiveness of learned methods.

When to Use ICA-Based rPPG

For practical applications, ICA-based methods remain relevant in:

- Embedded systems with limited memory: Classical ICA has low parameter count and runtime compared to neural networks, making it practical on microcontrollers and resource-constrained edge processors

- Research scenarios requiring interpretability: ICA provides an explicit decomposition that researchers can analyze and validate; neural networks don't offer equivalent interpretability

- Cross-dataset generalization without retraining: Classical methods generalize to new populations without dataset-specific fine-tuning, while neural networks often require adaptation

- No training data available: New deployment contexts without labeled training data favor model-based classical methods

For maximum accuracy in well-resourced deployments with diverse populations and challenging conditions, neural network methods generally outperform ICA today. The two approaches are complementary rather than mutually exclusive.

Frequently Asked Questions

What is ICA in rPPG? Independent Component Analysis (ICA) is a signal processing technique that separates a mixture of signals into independent components. In rPPG, ICA decomposes the RGB camera signal from facial video into independent sources, one of which is the blood volume pulse signal used to estimate heart rate.

Why was ICA the preferred rPPG method before deep learning? ICA provided a principled mathematical framework for blind source separation that worked reasonably well in controlled conditions without requiring training data. It offered interpretable components and low computational requirements. Before large labeled video datasets and GPU computing made deep learning practical, ICA was among the best available approaches.

What are the limitations of ICA for rPPG? ICA assumes linear mixing of statistically independent sources. Real rPPG scenarios violate these assumptions: the light-tissue interaction is nonlinear, motion and respiration create partially correlated artifacts, and the mixing conditions change over time. These violations explain ICA's performance degradation under motion and variable lighting compared to model-based and learned methods.

How does ICA compare to CHROM for rPPG? CHROM uses a theoretically motivated skin chrominance model (a form of constrained ICA) that typically outperforms blind ICA in stable illumination. ICA is more general and can adapt to illumination conditions CHROM's model doesn't capture, but at the cost of higher variance in individual estimates.

Is ICA still used in rPPG research? ICA appears in current research primarily as a baseline comparison method, a preprocessing step in hybrid systems, or a component of edge-deployable rPPG systems where neural network inference is computationally prohibitive. It's no longer the state of the art for maximum accuracy but remains practically relevant.