Temporal Convolutional Networks for PPG: Why TCNs Still Matter

Temporal convolutional networks give PPG teams a strong alternative to transformers: they model long waveform context with causal and dilated convolutions, need less compute, and train stably on wearable signals.

Temporal convolutional networks, or TCNs, remain one of the most practical model families for photoplethysmography (PPG) because they capture long waveform context with causal and dilated convolutions, train more stably than many recurrent setups, and often deliver strong accuracy at a much lower compute cost than transformers. For wearable PPG tasks such as heart rate estimation, rhythm screening, signal quality assessment, and sleep or stress inference, that combination of temporal coverage, efficiency, and deployment friendliness still matters.

Transformer papers dominate attention in time series machine learning, and some of that attention is deserved. Self-attention can model very long relationships and can work well when datasets are large, labels are rich, and infrastructure is generous. But PPG teams do not always live in that world. They often work with noisy wrist signals, modest datasets, battery-constrained devices, latency targets, and pipelines that must survive motion, missing beats, skin tone variation, and sensor placement changes.

That is exactly why TCNs still deserve a serious place in the toolkit.

A well-designed TCN can cover hundreds or thousands of samples, preserve temporal ordering, avoid recurrent bottlenecks, and fit comfortably into edge or near-edge systems. In many wearable settings, the right question is not whether a transformer is more fashionable. The right question is which architecture solves the signal problem reliably with the least operational pain.

What a TCN actually does

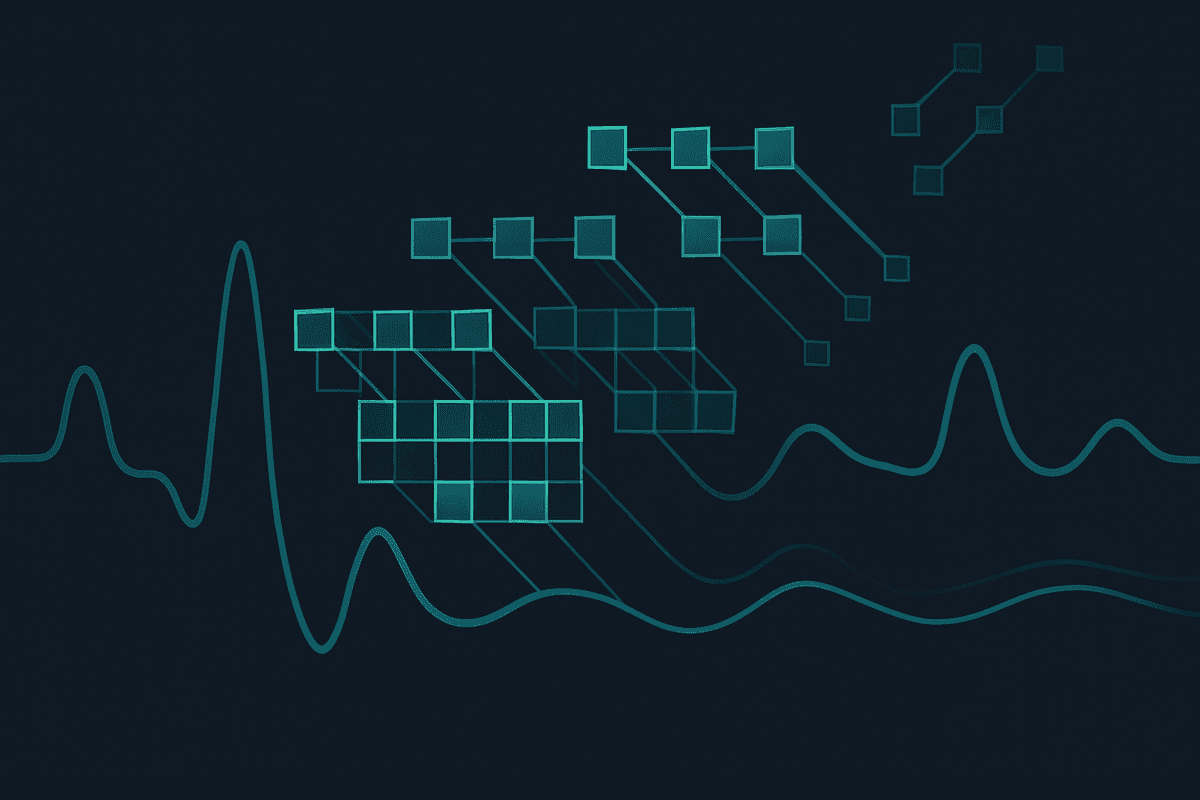

A TCN is a convolutional architecture for sequence modeling. The core idea is simple: instead of looking at a signal through recurrent state updates or global self-attention, the model applies 1D convolutions across time. To make this work well for sequence tasks, modern TCNs typically use three ingredients:

- Causal convolutions, so predictions at time step t only depend on the present and past.

- Dilated convolutions, so the receptive field can grow exponentially with depth (when the dilation doubles at each layer) instead of linearly.

- Residual blocks, so deeper temporal stacks remain trainable.
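
To make the first two ingredients concrete, here is a minimal single-channel sketch in plain Python. The function name `causal_dilated_conv` and the tap ordering (the first kernel weight applies to the current sample) are choices made for this illustration, not a convention taken from any particular library.

```python
def causal_dilated_conv(x, kernel, dilation=1):
    """1D causal convolution: output[t] uses only x[t], x[t-d], x[t-2d], ...

    x      : list of floats (one channel of samples)
    kernel : list of floats; kernel[0] weights the current sample and
             kernel[j] weights the sample j * dilation steps in the past
    """
    out = []
    for t in range(len(x)):
        acc = 0.0
        for j, w in enumerate(kernel):
            idx = t - j * dilation
            if idx >= 0:  # samples before the start act as zero padding
                acc += w * x[idx]
        out.append(acc)
    return out


impulse = [0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]  # unit impulse at t = 1
print(causal_dilated_conv(impulse, [1.0, 1.0, 1.0], dilation=1))
print(causal_dilated_conv(impulse, [1.0, 1.0, 1.0], dilation=2))
```

With dilation 1 the impulse influences three consecutive outputs. With dilation 2 the same three weights reach five samples back, and no output before t = 1 is affected in either case.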

If the kernel size is k and dilation grows like 1, 2, 4, 8, and so on, the receptive field expands quickly without requiring huge filters. That gives the network access to long-range timing structure such as beat-to-beat intervals, respiratory modulation, pulse morphology changes, and motion-corrupted stretches that unfold over seconds rather than milliseconds.
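
The arithmetic behind that claim is short enough to write down. The sketch below assumes a plain stack of dilated convolutions with stride 1, where each convolution with dilation d adds (k - 1) * d samples of context. The `convs_per_level` parameter is a hypothetical knob for designs that place more than one convolution at each dilation level.

```python
def receptive_field(kernel_size, dilations, convs_per_level=1):
    """Number of input samples that can influence a single output sample."""
    return 1 + convs_per_level * (kernel_size - 1) * sum(dilations)


doubling = [1, 2, 4, 8, 16, 32, 64, 128]
print(receptive_field(3, doubling))                     # 511
print(receptive_field(3, [1] * 8))                      # 17, same depth without dilation
print(receptive_field(3, doubling, convs_per_level=2))  # 1021
```

Eight layers of kernel size 3 see 17 samples without dilation and 511 samples with doubling dilations, which is the exponential-versus-linear difference in practice.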

For PPG, this is a very natural fit. PPG is a waveform. Local morphology matters, but broader context matters too. A single pulse tells part of the story. A run of pulses over several seconds often tells much more.

Why PPG is a good match for TCNs

Wearable PPG is messy in predictable ways. The signal is quasi-periodic, strongly time-dependent, and frequently distorted by motion, contact pressure, ambient light, and device-specific sampling behavior. A useful model must do at least two things at once:

- detect short-term pulse features such as systolic upstroke, peak timing, pulse width, and local amplitude changes

- aggregate context across longer windows to separate physiological variation from artifact

TCNs do both efficiently.

Early convolutional layers learn local morphology. Deeper dilated layers accumulate broader context. That makes TCNs especially effective when labels depend on a blend of instant waveform shape and slower temporal patterning.

Consider a few common PPG tasks:

Heart rate and pulse rate estimation

Heart rate from PPG is not just peak counting. In real wearable data, peaks get flattened, doubled, shifted, or obscured by motion. A TCN can use surrounding beats to infer the likely rhythm even when a short region is ambiguous. If you are also comparing other deep learning approaches for rate estimation, our overview of deep learning heart rate estimation gives the broader landscape.

Signal quality assessment

Quality labels often depend on consistency over several beats. One clean-looking pulse inside a noisy five-second segment does not make the segment reliable. Dilated convolutions let the model judge temporal stability without an expensive attention map.

Arrhythmia or rhythm screening

Irregular intervals and morphology changes emerge over time. A TCN can capture these sequential patterns directly, especially when combined with careful windowing and calibration.

Sleep, stress, or autonomic inference

These tasks often rely on trends in pulse intervals, amplitude variation, respiratory coupling, and subtle morphology changes across longer contexts. Again, this plays to the strengths of temporal convolutions.

Why TCNs can be a better practical choice than transformers

Transformers are powerful, but wearable PPG creates a specific engineering environment. In that environment, TCNs often win on the variables that product teams care about most.

1. Lower compute and memory pressure

Standard self-attention scales quadratically with sequence length in both compute and memory, so it becomes expensive as windows grow. PPG windows get long quickly when you preserve high sampling rates, and stacking channels such as raw PPG, derivatives, accelerometer, or quality masks adds to the input size. TCN compute scales linearly with window length, and TCNs usually require less memory for comparable context lengths.
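
A rough back-of-the-envelope count shows how the two cost profiles diverge. This is a sketch under simplifying assumptions: one single-head attention layer of width `width` without its feed-forward sublayer, compared with one 1D convolution layer with the same number of input and output channels. A real TCN needs several such layers to cover the window, so treat the numbers as scaling behavior, not as a full model comparison.

```python
def attention_macs(seq_len, width):
    """Approximate multiply-accumulates for one single-head self-attention layer."""
    scores_and_mix = 2 * seq_len * seq_len * width  # Q K^T, then weights times V
    projections = 4 * seq_len * width * width       # Q, K, V and output projections
    return scores_and_mix + projections


def conv_macs(seq_len, channels, kernel_size):
    """Approximate multiply-accumulates for one 1D convolution layer."""
    return seq_len * kernel_size * channels * channels


for seq_len in (500, 2000):  # e.g. 10 s and 40 s at an assumed 50 Hz
    print(seq_len, attention_macs(seq_len, 64), conv_macs(seq_len, 64, 3))
```

Quadrupling the window multiplies the convolution cost by exactly 4, while the attention cost grows by more than 13 times at this width because the quadratic term dominates.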

That matters during both training and deployment. Smaller memory footprints allow larger batch sizes, faster iteration, and easier on-device inference.

2. Stable training on smaller datasets

Wearable biomedical datasets are rarely as large or as clean as mainstream foundation-model corpora. TCNs tend to behave well in lower-data regimes, especially when paired with good preprocessing, augmentation, and normalization. They have fewer moving parts than transformer stacks and often need less hyperparameter drama to converge to something useful.

3. Strong inductive bias for local temporal structure

PPG is not arbitrary text. Nearby samples are highly meaningful, and local patterns repeat with variation across beats. Convolutional filters encode this prior efficiently. Transformers can learn it too, but they may spend more capacity rediscovering structure that convolutions assume naturally.

4. Easier path to edge deployment

Many PPG products live on watches, rings, patches, or phones. Those environments reward compact models, predictable latency, and efficient streaming. Causal TCNs are well suited to incremental inference. They can process rolling windows with less overhead than a full attention mechanism.
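
As an illustration of incremental inference, a single causal convolution layer can be run one sample at a time by keeping only the history it needs. The class below is a hypothetical sketch for one layer and one channel. A full streaming TCN would keep one such buffer per layer and per channel.

```python
from collections import deque


class StreamingCausalConv:
    """Runs a causal dilated convolution sample by sample with a fixed-size buffer."""

    def __init__(self, kernel, dilation=1):
        self.kernel = list(kernel)  # kernel[0] weights the newest sample
        self.dilation = dilation
        history = (len(self.kernel) - 1) * dilation + 1
        # Pre-fill with zeros so early outputs match zero-padded batch inference.
        self.buffer = deque([0.0] * history, maxlen=history)

    def step(self, sample):
        self.buffer.append(sample)  # the oldest sample drops out automatically
        return sum(
            w * self.buffer[-1 - j * self.dilation]
            for j, w in enumerate(self.kernel)
        )


conv = StreamingCausalConv([0.5, 0.25, 0.25], dilation=2)
print([conv.step(s) for s in [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]])
```

Memory per layer is fixed at (k - 1) * d + 1 samples regardless of how long the device has been recording, which is what makes latency and footprint predictable.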

If your end goal is a wearable feature that has to ship, not just a benchmark result, this is a serious advantage.

For teams exploring the other side of the tradeoff, our guide to PPG transformer models covers when attention-heavy architectures make sense.

TCNs line up well with the way PPG pipelines are built

Most production PPG systems already rely on stage-wise thinking. They denoise, segment, normalize, featurize, classify, or regress across windows. TCNs slot naturally into these pipelines.

A common pattern looks like this:

- band-limit or denoise raw PPG

- synchronize or align with accelerometer and labels

- split into fixed windows, often with overlap

- normalize per window, channel, or subject

- feed the sequence to a temporal model

- smooth or calibrate outputs at the session level

TCNs are flexible at the fifth step, where the sequence is fed to a temporal model. They can consume raw waveform segments directly, or they can process lightly engineered channels such as first derivative, second derivative, signal quality masks, or accelerometer context.
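
The windowing and normalization steps of that pattern can be sketched in a few lines. The 50 Hz sampling rate, the 10-second window, the 5-second hop, and the synthetic sine wave standing in for PPG are all assumptions made for the example.

```python
import math


def sliding_windows(samples, window_len, hop):
    """Split a recording into fixed-length windows; overlap is window_len - hop."""
    last_start = len(samples) - window_len
    return [samples[s:s + window_len] for s in range(0, last_start + 1, hop)]


def zscore(window, eps=1e-8):
    """Per-window standardization: zero mean and unit variance."""
    mean = sum(window) / len(window)
    var = sum((v - mean) ** 2 for v in window) / len(window)
    return [(v - mean) / (math.sqrt(var) + eps) for v in window]


fs = 50  # Hz, assumed
ppg = [math.sin(2 * math.pi * 1.2 * n / fs) for n in range(30 * fs)]  # synthetic 72 bpm
windows = [zscore(w) for w in sliding_windows(ppg, window_len=10 * fs, hop=5 * fs)]
print(len(windows), len(windows[0]))
```

Per-window normalization is only one of the options listed above. Per-channel or per-subject statistics follow the same shape with the mean and variance computed over a different set of samples.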

They also pair well with classical preprocessing. That is useful because PPG often benefits from targeted cleanup before modeling. For example, wavelet-based approaches can reduce artifact and baseline issues before a TCN ever sees the waveform. We cover that workflow in more detail here: wavelet denoising for PPG.

Design choices that matter most for PPG TCNs

Not every temporal convolution stack is equally good. A practical PPG TCN usually succeeds because of a few disciplined design choices.

Receptive field sizing

The receptive field should match the physiology and the task. A model estimating instantaneous heart rate from a clean finger sensor may need less context than a wrist-worn arrhythmia screen under motion. If sampling is 50 Hz and you want the model to reason over about 10 seconds, the network needs a receptive field covering roughly 500 samples. Dilations make this feasible without making the network huge.
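
To turn the 500-sample target into a depth, one can count how many doubling dilation levels are needed. This sketch assumes kernel size 3, stride 1, and dilations of 1, 2, 4, and so on. The `convs_per_level` argument is a hypothetical parameter for blocks that stack more than one convolution at each dilation.

```python
def levels_needed(target_samples, kernel_size=3, convs_per_level=2):
    """Smallest number of doubling dilation levels whose receptive field covers the target."""
    levels, rf = 0, 1
    while rf < target_samples:
        rf += convs_per_level * (kernel_size - 1) * (2 ** levels)
        levels += 1
    return levels, rf


fs_hz, context_s = 50, 10
print(levels_needed(fs_hz * context_s))                     # (7, 509)
print(levels_needed(fs_hz * context_s, convs_per_level=1))  # (8, 511)
```

Under these assumptions, seven or eight levels are enough for ten seconds at 50 Hz, which is why the model can stay small.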

Causal versus non-causal mode

For real-time inference, causal TCNs are the right default because they do not peek into the future. For offline analysis, non-causal or centered convolutions may improve accuracy because both left and right context are available. Teams should choose this explicitly instead of mixing evaluation settings.
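
In implementation terms the difference is mostly where the padding goes. The sketch below uses a hypothetical `dilated_conv` helper in which the last kernel weight lines up with the current sample in causal mode. It then perturbs a future sample to show which mode is affected.

```python
def dilated_conv(x, kernel, dilation=1, causal=True):
    """Same-length 1D convolution with either left-only or split zero padding."""
    span = (len(kernel) - 1) * dilation
    # Causal: all padding on the left. Centered: padding split across both sides.
    left = span if causal else span // 2
    padded = [0.0] * left + list(x) + [0.0] * (span - left)
    return [
        sum(w * padded[t + j * dilation] for j, w in enumerate(kernel))
        for t in range(len(x))
    ]


x = [1.0, 2.0, 3.0, 4.0, 5.0]
x_future_changed = [1.0, 2.0, 3.0, 4.0, 50.0]  # only the last sample differs
k = [1.0, 1.0, 1.0]

print(dilated_conv(x, k, causal=True)[:4] == dilated_conv(x_future_changed, k, causal=True)[:4])
print(dilated_conv(x, k, causal=False)[:4] == dilated_conv(x_future_changed, k, causal=False)[:4])
```

The first comparison prints True and the second prints False: the centered variant lets the final sample leak into the output one step earlier, which is fine offline but invalid for a real-time claim.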

Residual and skip connections

Residual pathways help optimization and preserve fine-scale information. Skip connections from multiple temporal scales can also help, especially when both beat-level and window-level cues matter.
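
A stripped-down residual block shows why the identity path preserves fine-scale information. This is a single-channel sketch loosely modeled on the two-convolution block described by Bai et al. Normalization, dropout, the final activation, and multi-scale skip connections are left out for brevity, and all function names are made up for the example.

```python
def causal_conv(x, kernel, dilation):
    """Causal dilated convolution; kernel[0] weights the current sample."""
    return [
        sum(w * x[t - j * dilation] for j, w in enumerate(kernel) if t - j * dilation >= 0)
        for t in range(len(x))
    ]


def relu(x):
    return [max(0.0, v) for v in x]


def residual_block(x, kernel_a, kernel_b, dilation):
    """Two dilated causal convolutions plus an identity shortcut."""
    h = relu(causal_conv(x, kernel_a, dilation))
    h = relu(causal_conv(h, kernel_b, dilation))
    return [xi + hi for xi, hi in zip(x, h)]  # input is added back unchanged


pulse = [0.0, 0.2, 1.0, 0.6, 0.3, 0.1, 0.0, 0.0]
print(residual_block(pulse, [0.0, 0.0, 0.0], [0.0, 0.0, 0.0], dilation=2))
print(residual_block(pulse, [0.5, 0.3, 0.2], [0.4, 0.4, 0.2], dilation=2))
```

With all-zero weights the block returns its input untouched, so a deep stack starts close to an identity mapping and each block only has to learn a correction.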

Normalization strategy

Batch normalization is common, but it is not always ideal when batch sizes are small or subject distributions vary widely. Layer normalization, weight normalization, or channel-wise standardization can be more stable in some wearable setups.

Multi-channel input

PPG rarely exists alone in a wearable. Motion signals are often available and often critical. A TCN that ingests PPG plus accelerometer channels can learn to discount motion-corrupted regions more intelligently than a single-channel model.
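
One simple way to build such an input is to stack time-aligned channels into a (channels, time) layout. The specific channels chosen here (raw PPG, its first difference, and accelerometer magnitude) and the helper names are assumptions for illustration. The sketch also assumes the streams have already been resampled to a common rate.

```python
import math


def accel_magnitude(ax, ay, az):
    """Orientation-independent motion intensity from three accelerometer axes."""
    return [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(ax, ay, az)]


def first_difference(x):
    """Discrete first derivative, padded with a leading zero to keep the length."""
    return [0.0] + [b - a for a, b in zip(x, x[1:])]


def stack_channels(ppg, ax, ay, az):
    """Return a list of equal-length channels, i.e. shape (channels, time)."""
    channels = [ppg, first_difference(ppg), accel_magnitude(ax, ay, az)]
    if any(len(c) != len(ppg) for c in channels):
        raise ValueError("all channels must be time-aligned and the same length")
    return channels


x = stack_channels([1.0, 2.0, 4.0], [3.0, 0.0, 0.0], [4.0, 0.0, 0.0], [0.0, 0.0, 1.0])
print(len(x), len(x[0]))
```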

Loss shaping

Biomedical targets are often imbalanced or noisy. Classification tasks may benefit from focal loss or class-balanced sampling. Regression tasks may improve with robust losses such as Huber loss when label noise is substantial.
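
For reference, both losses are short when written per sample. The defaults below (`delta=1.0` for Huber, `gamma=2.0` and `alpha=0.25` for focal loss) are commonly used starting values, not recommendations specific to PPG, and should be tuned per task.

```python
import math


def huber_loss(pred, target, delta=1.0):
    """Quadratic for small errors, linear for large ones (less sensitive to label outliers)."""
    err = abs(pred - target)
    if err <= delta:
        return 0.5 * err * err
    return delta * (err - 0.5 * delta)


def focal_loss(p_positive, label, gamma=2.0, alpha=0.25):
    """Binary focal loss: down-weights examples the model already classifies confidently."""
    p_t = p_positive if label == 1 else 1.0 - p_positive
    alpha_t = alpha if label == 1 else 1.0 - alpha
    p_t = min(max(p_t, 1e-7), 1.0)  # clamp to avoid log(0)
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


print(huber_loss(72.5, 72.0), huber_loss(75.0, 72.0))  # small error vs large error
print(focal_loss(0.9, 1), focal_loss(0.6, 1))          # easy vs hard positive
```

With a heart rate label that is off by a few beats per minute, the Huber penalty grows linearly instead of quadratically, so a handful of misaligned references pulls the fit less.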

TCNs help when labels are imperfect

A less glamorous but very real reason to like TCNs is that wearable labels are often messy. Ground truth heart rate can be temporally misaligned. Stress labels may be coarse. Sleep stage labels may come from a different sensor stream. Arrhythmia annotations may be sparse.

In these cases, model simplicity is valuable. TCNs offer enough temporal expressiveness to learn useful structure without demanding huge annotation budgets or extremely careful pretraining schemes. They are often easier to debug too. When a TCN fails, you can usually inspect window length, receptive field, preprocessing, class balance, or dilation schedule. Those are concrete levers.

With large transformer systems, failures can be harder to attribute. Was the issue tokenization of patches, positional encoding choice, dataset scale, augmentation mismatch, optimization instability, or plain overcapacity? Sometimes the answer is yes to several of them.

Where transformers still have the edge

TCNs still matter, but they are not universal winners.

Transformers can outperform TCNs when:

- sequence relationships are very long and heterogeneous

- multimodal fusion is central

- pretraining on large unlabeled corpora is available

- the dataset is big enough to support high-capacity models

- attention patterns offer useful, if imperfect, interpretability for analysis

This is why the best strategy is often comparative rather than ideological. Use TCNs as a strong baseline and often as a production candidate. Bring in transformers when the problem scale, data regime, and deployment budget justify them.

For many PPG teams, that comparison is eye-opening. The transformer may win a leaderboard by a small margin, but the TCN may be far cheaper, easier to tune, and more reliable under domain shift. In actual product environments, that can be the better answer.

A realistic recipe for a wearable PPG TCN

If you wanted a sensible starting point today, it might look like this:

- input: 1 to 4 channels, such as raw PPG, first derivative, accelerometer magnitude, and a mask or quality feature

- window: 8 to 20 seconds depending on task

- backbone: 6 to 10 residual TCN blocks

- kernel size: 3 to 5

- dilation schedule: powers of 2 repeated once or twice

- hidden width: moderate, not huge

- output head: regression or classification layer with light pooling

- training: strong augmentation, subject-level splits, calibration on held-out users
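
One way to keep such a recipe honest is to write it down as a config object and check the receptive field it implies. Every default below is a hypothetical value picked from within the ranges above, and `TCNRecipe` is not an existing library class. The receptive-field formula assumes stride 1 and `convs_per_block` convolutions per residual block.

```python
from dataclasses import dataclass


@dataclass
class TCNRecipe:
    sample_rate_hz: int = 50
    window_s: float = 10.0
    in_channels: int = 3
    num_blocks: int = 8
    kernel_size: int = 3
    hidden_width: int = 32
    dilation_cycle: tuple = (1, 2, 4, 8)  # repeated until num_blocks is reached
    convs_per_block: int = 2

    def dilations(self):
        cycle = self.dilation_cycle
        return [cycle[i % len(cycle)] for i in range(self.num_blocks)]

    def receptive_field_s(self):
        samples = 1 + self.convs_per_block * (self.kernel_size - 1) * sum(self.dilations())
        return samples / self.sample_rate_hz


short_cycle = TCNRecipe()
long_cycle = TCNRecipe(dilation_cycle=(1, 2, 4, 8, 16, 32, 64, 128))
print(short_cycle.dilations(), short_cycle.receptive_field_s())
print(long_cycle.dilations(), long_cycle.receptive_field_s())
```

Under these assumptions, the repeated short cycle covers only about 2.4 seconds per output, so the pooling head has to aggregate across the rest of the window. The single long cycle covers roughly 20 seconds with the same number of blocks.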

Augmentations matter. Amplitude scaling, time masking, light temporal warping, noise injection, and motion-corruption simulation can all improve robustness when they reflect real device behavior.
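
Three of these augmentations fit in a few lines each. The ranges used here (gain between 0.8 and 1.2, noise standard deviation 0.05, masks up to 25 samples) are placeholder assumptions and should be replaced with values that reflect the actual sensor.

```python
import random


def amplitude_scale(x, rng, low=0.8, high=1.2):
    """Multiply the whole window by one random gain."""
    gain = rng.uniform(low, high)
    return [gain * v for v in x]


def add_noise(x, rng, sigma=0.05):
    """Add independent Gaussian noise to every sample."""
    return [v + rng.gauss(0.0, sigma) for v in x]


def time_mask(x, rng, max_len=25):
    """Zero out one random contiguous stretch, imitating a short dropout."""
    n = rng.randint(1, min(max_len, len(x)))
    start = rng.randint(0, len(x) - n)
    return x[:start] + [0.0] * n + x[start + n:]


rng = random.Random(0)  # fixed seed so the example is repeatable
window = [1.0] * 100
augmented = time_mask(add_noise(amplitude_scale(window, rng), rng), rng)
print(len(augmented), augmented.count(0.0))
```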

Evaluation protocol matters just as much. Random segment splits can overstate performance because adjacent windows from the same session are highly correlated. Subject-level or session-level splits are much more honest for wearable deployment.
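
A subject-level split is easy to implement by shuffling subject identifiers instead of windows. The record layout below, a list of dicts with a `subject_id` key, is a hypothetical format chosen for the example.

```python
import random


def subject_split(records, test_fraction=0.2, seed=0):
    """Split windows so that no subject appears in both train and test."""
    subjects = sorted({r["subject_id"] for r in records})
    rng = random.Random(seed)
    rng.shuffle(subjects)
    n_test = max(1, round(test_fraction * len(subjects)))
    test_subjects = set(subjects[:n_test])
    train = [r for r in records if r["subject_id"] not in test_subjects]
    test = [r for r in records if r["subject_id"] in test_subjects]
    return train, test


# Ten synthetic subjects with five windows each.
records = [{"subject_id": f"s{s:02d}", "window": w} for s in range(10) for w in range(5)]
train, test = subject_split(records)
print(len(train), len(test))
```

The same idea applies at the session level by grouping on a session identifier instead. Libraries such as scikit-learn provide grouped splitters that do this, if one is already in the stack.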

Why this matters commercially, not just academically

A model choice becomes strategic when it affects battery, latency, cloud spend, retraining effort, and regulatory documentation. TCNs are attractive because they keep the stack manageable.

A smaller temporal model can be easier to validate, compress, quantize, and monitor. It can support more frequent experimentation because training cycles are shorter. It can also leave room for ensembling, calibration, or a signal-quality gate without blowing the compute budget.

In healthcare and consumer wellness, operational reliability often beats architectural novelty. Teams need models that survive sensor updates, demographic shifts, firmware changes, and imperfect real-world wear behavior. TCNs are not magic, but they are a very grounded solution to those constraints.

That is why they still matter. They solve an important middle ground between classical signal processing and large attention models. They are expressive enough for complex wearable tasks and lean enough for real deployment.

FAQ

What is the main advantage of a TCN for PPG?

The main advantage is efficient long-context modeling. TCNs can learn local pulse morphology and longer temporal dependencies at the same time, while keeping compute and memory demands relatively modest.

Are TCNs better than transformers for every PPG task?

No. Transformers may do better when datasets are large, multimodal context is rich, or very long and variable dependencies dominate the task. But TCNs are often the better practical starting point for wearable PPG because they are simpler, cheaper, and easier to deploy.

Can a TCN run in real time on a wearable or phone?

Often yes. Causal temporal convolutions are a good fit for streaming inference, and compact TCNs can be quantized or optimized for mobile and embedded environments more easily than larger attention models.

How much context should a PPG TCN see?

That depends on the task, sampling rate, and label definition. Many wearable tasks benefit from several seconds of context. The right receptive field should be chosen deliberately so the network can capture multiple beats and slower modulation patterns.

Should I denoise PPG before feeding it into a TCN?

Usually yes, or at least compare denoised and raw-input variants. TCNs can learn robustness, but good preprocessing still helps. Filtering, artifact suppression, and quality-aware windowing often improve both training stability and generalization.

What should I compare a TCN against?

At minimum, compare it against a classical feature-based baseline, a simpler CNN, and if resources allow, a transformer baseline. The goal is not to prove one architecture is universally best. The goal is to find the best accuracy-to-complexity tradeoff for your device and task.

References

- Bai, Kolter, and Koltun. An Empirical Evaluation of Generic Convolutional and Recurrent Networks for Sequence Modeling. https://arxiv.org/abs/1803.01271

- A recent review of deep learning for photoplethysmography applications. https://arxiv.org/abs/2211.14734

- Schwab et al. Beat by beat: Classifying cardiac arrhythmias with recurrent neural networks. https://doi.org/10.1038/s41746-019-0136-7