Neural Architecture Search for PPG Processing on Wearable Devices

How Neural Architecture Search discovers optimal PPG processing networks for wearables, balancing accuracy with latency and power constraints on edge hardware.

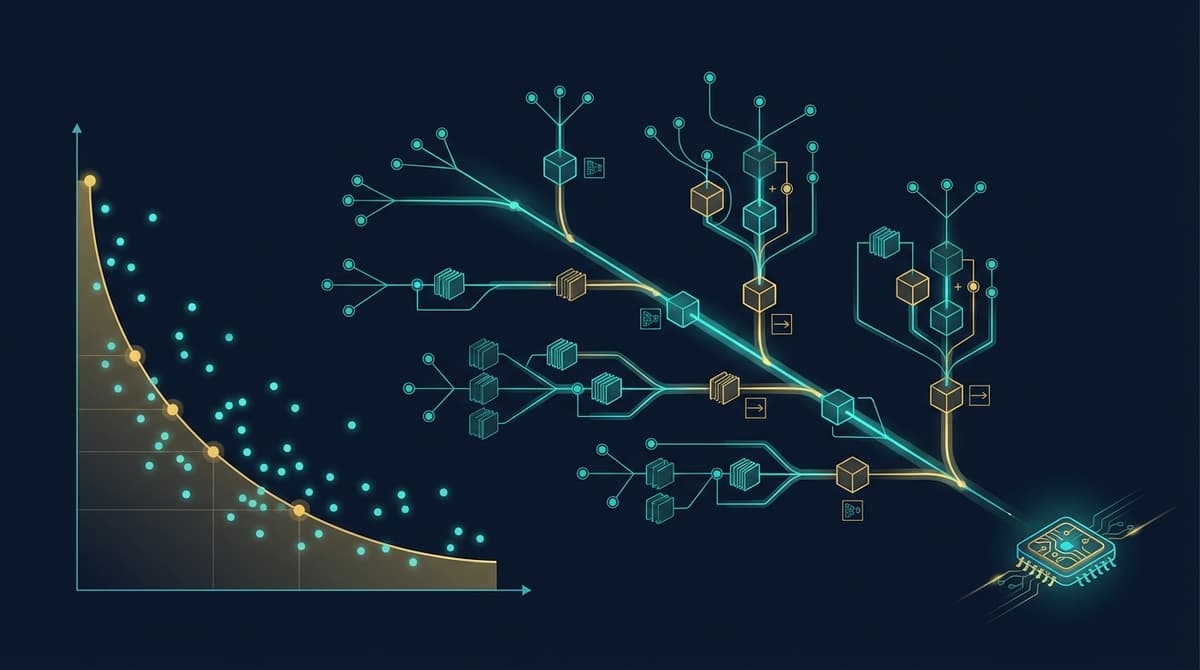

Neural Architecture Search (NAS) automates the discovery of deep learning architectures for PPG signal processing. It explores large combinatorial spaces of layer types, kernel sizes, and connectivity patterns, then evaluates each candidate against both accuracy and hardware objectives such as latency and energy draw. Applied to PPG tasks such as heart rate estimation, atrial fibrillation detection, and blood pressure inference, NAS can find networks that match or outperform hand-designed baselines while fitting inside the tight compute and memory budgets of wrist-worn devices.

Why Hand-Designed Architectures Fall Short for Wearable PPG

Most PPG deep learning models published in the research literature were designed by human experts borrowing ideas from image or speech processing. A researcher might stack several 1D convolutional layers, add a bidirectional LSTM, and tune hyperparameters by trial and error over a few weeks. The resulting model may achieve competitive accuracy on a benchmark dataset, but it was never explicitly optimized for the processor it will eventually run on.

Wearable chips impose hard constraints that general-purpose architectures ignore. An ARM Cortex-M4 running at 64 MHz has perhaps 256 KB of SRAM and, at best, a floating-point unit limited to 32-bit single precision, with no dedicated acceleration for matrix operations. A standard ResNet-style block designed for a GPU may have the right receptive field for capturing 10-second PPG windows, yet its channel counts and skip-connection patterns create memory access patterns that fit poorly into the small on-chip SRAM and whatever vendor-specific cache the part provides, inflating latency by a factor of three or more compared to what raw FLOP counts would suggest.

Beyond memory, there is the question of depth versus width trade-offs. For 1D biomedical signals, wider kernels capture longer temporal context, but they are expensive. Dilated convolutions offer a way to extend receptive fields without proportionally increasing parameters. Deciding which layers should use dilation, which should use depth-wise separable convolutions, and where skip connections add value is a combinatorial problem with billions of valid configurations. Human designers explore only a tiny fraction of that space. NAS explores it systematically.
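To make the receptive-field argument concrete, the sketch below compares four stacked kernel-3 convolutions with and without dilation. It assumes stride 1 throughout; the function name `receptive_field` and the two example layer lists are illustrative and not taken from any particular NAS framework.

```python
def receptive_field(layers):
    """Receptive field, in samples, of stacked stride-1 1D convolutions.

    `layers` is a list of (kernel_size, dilation) pairs.
    """
    rf = 1
    for kernel_size, dilation in layers:
        # Each layer widens the field by (kernel_size - 1) * dilation samples.
        rf += (kernel_size - 1) * dilation
    return rf


def taps_per_channel_pair(layers):
    """Kernel taps per input/output channel pair; dilation adds none."""
    return sum(kernel_size for kernel_size, _ in layers)


plain = [(3, 1), (3, 1), (3, 1), (3, 1)]
dilated = [(3, 1), (3, 2), (3, 4), (3, 8)]

print(receptive_field(plain), taps_per_channel_pair(plain))
print(receptive_field(dilated), taps_per_channel_pair(dilated))
```

Both stacks use the same 12 kernel taps per channel pair, but the dilated stack sees 31 samples instead of 9. At a 25 Hz sampling rate that is roughly 1.2 seconds of signal rather than 0.36 seconds.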

Search Spaces for 1D PPG Time-Series

Designing the search space is the first and most consequential step in any NAS project. A poorly specified space will waste compute on irrelevant configurations, or miss the right inductive biases entirely.

Convolutional Primitives

For PPG, the basic building blocks are 1D convolutional operations with varying kernel sizes (typically 3, 5, 9, 17, and 33 samples), optional dilation rates (1, 2, 4, 8), and channel multipliers. Depth-wise separable convolutions are almost always included because they reduce parameter counts by roughly an order of magnitude with modest accuracy penalties. Each position in the network can be assigned one of these primitives, or skipped entirely via an identity connection.
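The parameter saving from depth-wise separable convolutions can be checked with a few lines of arithmetic. The sketch below assumes 32 input and 32 output channels (an illustrative choice) and omits bias terms; the function names are hypothetical helpers.

```python
def standard_conv1d_params(c_in, c_out, kernel_size):
    """Weights in a standard 1D convolution (bias omitted)."""
    return c_in * c_out * kernel_size


def separable_conv1d_params(c_in, c_out, kernel_size):
    """Weights in a depth-wise separable 1D convolution (bias omitted)."""
    depthwise = c_in * kernel_size  # one kernel per input channel
    pointwise = c_in * c_out        # 1x1 convolution that mixes channels
    return depthwise + pointwise


for kernel_size in (3, 5, 9, 17, 33):
    std = standard_conv1d_params(32, 32, kernel_size)
    sep = separable_conv1d_params(32, 32, kernel_size)
    print(kernel_size, std, sep, round(std / sep, 1))
```

Under these assumptions the saving is about 7x at kernel size 9 and about 16x at kernel size 33, but under 3x at kernel size 3, so the order-of-magnitude figure applies mainly to the wider kernels in the search space.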

Skip Connections and Residual Patterns

Skip connections matter more for PPG than might be expected. PPG signals contain both slow baseline drift and rapid pulse-wave features at very different frequency bands. Architectures that let gradients bypass several layers of processing can learn separate representations for these components, much like a multi-resolution analysis. NAS search spaces that include optional skip connections between non-adjacent layers consistently produce better signal decomposition than purely sequential designs.

Attention and Recurrent Cells

Some NAS search spaces for biosignals incorporate lightweight attention mechanisms or gated recurrent units as optional node types. For PPG specifically, temporal attention helps the model weight cleaner signal segments over motion-corrupted ones within a single window, which matters during physical activity. Including these as optional search candidates, rather than fixing them as mandatory components, lets the NAS decide empirically whether they improve the accuracy-cost trade-off for a given task.

Sequence Length and Stride

The temporal receptive field is a hyperparameter that interacts strongly with architecture choices. For resting heart rate, a 10-second window at 25 Hz may suffice. Detecting paroxysmal AF may require 30 seconds or more. NAS frameworks for PPG sometimes include window duration and subsampling stride as searchable dimensions, allowing the discovered architecture to co-optimize the signal processing pipeline alongside the neural network structure.

Hardware-Aware NAS for Edge Deployment

Standard NAS optimizes a single objective, usually validation loss. Hardware-aware NAS adds one or more hardware proxy metrics to the optimization objective, penalizing architectures that would exceed the target device's budget.
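One simple way to express such a penalty is a scalar score that adds a cost only when a candidate exceeds its budget. The sketch below is an assumption about the form of the objective, not the formulation of any specific NAS method; `penalty_weight` and the hinge-style penalty are illustrative choices.

```python
def hardware_aware_score(val_loss, latency_ms, budget_ms, penalty_weight=1.0):
    """Lower is better: validation loss plus a penalty for exceeding the budget."""
    # Relative overshoot is zero whenever the candidate fits the budget.
    overshoot = max(0.0, latency_ms / budget_ms - 1.0)
    return val_loss + penalty_weight * overshoot


# A slightly less accurate model that fits a 20 ms budget beats a
# more accurate one that overshoots it by 50%.
fits = hardware_aware_score(val_loss=0.30, latency_ms=15.0, budget_ms=20.0)
too_slow = hardware_aware_score(val_loss=0.25, latency_ms=30.0, budget_ms=20.0)
print(fits, too_slow)
```

Multi-objective searches often keep the objectives separate and build a Pareto front instead of collapsing them into one number; the scalar form is simply the easiest to plug into an existing single-objective search loop.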

Latency Proxies

The simplest approach is to build a lookup table of measured latencies for each primitive operation on the target chip, then estimate total latency as the sum of per-layer costs. This works reasonably well when operations are executed sequentially, which is common on microcontrollers without parallel execution units. More accurate proxies account for memory bandwidth limitations: a layer with many small operations may be bandwidth-bound rather than compute-bound, and a lookup table that ignores data movement will underestimate its cost.
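A lookup-table latency proxy can be sketched in a few lines. The keys and microsecond values below are made-up placeholders, not measurements from any real chip; in practice each entry would come from timing that operation on the target device.

```python
# Hypothetical per-operation latencies in microseconds, keyed by
# (operation type, kernel size, channel count). Placeholder values only.
LATENCY_LUT_US = {
    ("conv", 3, 16): 300.0,
    ("conv", 9, 16): 800.0,
    ("sep_conv", 9, 16): 250.0,
    ("identity", 0, 16): 5.0,
}


def estimate_latency_us(architecture, lut):
    """Sum per-layer costs, assuming layers execute strictly one after another."""
    return sum(lut[op] for op in architecture)


candidate = [("conv", 3, 16), ("sep_conv", 9, 16), ("identity", 0, 16)]
print(estimate_latency_us(candidate, LATENCY_LUT_US))
```

Because the estimate is a plain sum, it carries the same blind spot the paragraph above describes: data movement between layers is not modeled unless the measured entries already include it.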

Once-for-all NAS methods train a single large supernet that contains all candidate sub-networks as weight-sharing subgraphs. A sub-network is extracted by choosing one kernel size and channel width at each layer. Because all sub-networks share weights, the supernet needs to be trained only once, and thousands of sub-networks can be evaluated cheaply by sampling from it. This is particularly appealing for wearable PPG, where the hardware target may change across product generations and re-running a full NAS from scratch would be expensive.
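The weight-sharing idea can be illustrated without a deep learning framework. In the simplified sketch below, a sub-network is a list of per-layer (kernel size, channel width) choices, and a smaller kernel reuses the center taps of the largest one. The choice sets and function names are hypothetical, and published once-for-all methods add further machinery that is omitted here.

```python
import random

KERNEL_CHOICES = (3, 5, 9)    # illustrative choice sets, not from a paper
WIDTH_CHOICES = (8, 16, 32)


def sample_subnet(num_layers, rng):
    """Pick one kernel size and one channel width for every layer."""
    return [(rng.choice(KERNEL_CHOICES), rng.choice(WIDTH_CHOICES))
            for _ in range(num_layers)]


def slice_kernel(full_kernel, kernel_size):
    """Share weights by center-cropping the largest kernel's taps."""
    start = (len(full_kernel) - kernel_size) // 2
    return full_kernel[start:start + kernel_size]


rng = random.Random(0)
print(sample_subnet(num_layers=4, rng=rng))

supernet_taps = [0.1, 0.2, 0.3, 0.4, 0.5, 0.4, 0.3, 0.2, 0.1]  # kernel size 9
print(slice_kernel(supernet_taps, 3))
```

Each sampled configuration can then be scored with the shared weights and a latency estimate, with no retraining per candidate.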

Power and Energy Constraints

For continuous monitoring applications, average power consumption matters as much as peak latency. A model that runs in 50 ms but holds the device at an average draw of 40 mW cannot support 24-hour wear on a 200 mAh battery. Hardware-aware NAS for PPG wearables increasingly treats multiply-accumulate (MAC) count and memory footprint as proxy objectives for energy, since dynamic energy in CMOS circuits scales roughly with the number of memory accesses and arithmetic operations.
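The battery claim can be verified with back-of-the-envelope arithmetic. The sketch below assumes a nominal cell voltage of 3.7 V, which is not stated in the text, and ignores regulator losses and the rest of the system's power draw.

```python
def battery_life_hours(capacity_mah, nominal_voltage_v, avg_power_mw):
    """Runtime at a constant average power draw."""
    energy_mwh = capacity_mah * nominal_voltage_v
    return energy_mwh / avg_power_mw


def max_average_power_mw(capacity_mah, nominal_voltage_v, target_hours):
    """Largest average draw that still reaches the target runtime."""
    return capacity_mah * nominal_voltage_v / target_hours


# 3.7 V is an assumed nominal voltage for a small lithium cell.
print(battery_life_hours(200, 3.7, 40))
print(max_average_power_mw(200, 3.7, 24))
```

Under these assumptions, 200 mAh holds about 740 mWh, which lasts roughly 18.5 hours at 40 mW. Reaching 24 hours requires an average draw below about 31 mW for the entire device, not just the model.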

Work from the MIT HAN Lab and related groups has shown that jointly optimizing for accuracy, latency, and energy produces Pareto-optimal architectures that simple post-hoc pruning of hand-designed networks cannot match (https://doi.org/10.1145/3352460.3358302). The intuition is that pruning removes parameters from a fixed topology, while NAS can discover fundamentally different topologies where the same computation is distributed more efficiently across layers.
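Extracting a Pareto front from a set of evaluated candidates takes only a dominance check. In the sketch below every objective is minimized, and the candidate tuples of (error in BPM, latency in ms, energy in mJ) are invented values for illustration only.

```python
def dominates(a, b):
    """True if `a` is no worse than `b` everywhere and strictly better somewhere."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better


def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]


# (error_bpm, latency_ms, energy_mj): illustrative numbers, not results.
candidates = [
    (2.4, 18.0, 0.9),
    (2.1, 35.0, 1.6),
    (2.6, 25.0, 1.2),  # worse than the first candidate on every axis
    (3.0, 12.0, 0.6),
]
front = pareto_front(candidates)
print(front)
```

The third candidate is dropped because the first beats it on all three objectives; the remaining three each represent a different trade-off, and choosing among them is a product decision rather than a search decision.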

See the companion article on PPG power consumption design for a deeper treatment of energy budgeting at the system level.

On-Device Quantization Awareness

Modern hardware-aware NAS pipelines fold quantization into the search process. Operations that are sensitive to 8-bit or 4-bit precision are flagged during the search, and the framework either penalizes their inclusion or adds a mixed-precision search dimension that assigns higher bit-widths to sensitive layers. For PPG signals, which are relatively low-frequency and smooth compared to images, many layers tolerate aggressive quantization with less than 1% accuracy degradation, which opens up significant area and energy savings on fixed-point accelerators.
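A quick way to see why some layers tolerate lower precision better than others is to quantize a set of weights and measure the round-trip error. The sketch below uses symmetric per-tensor quantization, one common scheme chosen here as an assumption; the example weights are arbitrary.

```python
def quantize_symmetric(values, num_bits=8):
    """Map floats to signed integers in [-qmax, qmax] with one shared scale."""
    qmax = 2 ** (num_bits - 1) - 1
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def max_roundtrip_error(values, num_bits):
    """Largest absolute error after quantizing and dequantizing."""
    quantized, scale = quantize_symmetric(values, num_bits)
    return max(abs(v - q * scale) for v, q in zip(values, quantized))


weights = [0.5, -0.25, 0.1, 0.03, -0.4]  # arbitrary example weights
err8 = max_roundtrip_error(weights, 8)
err4 = max_roundtrip_error(weights, 4)
print(err8, err4)
```

A mixed-precision search could use a per-layer error measure of this kind, or more directly the accuracy drop it causes, to decide which layers keep 8 bits and which can drop to 4.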

NAS Results for Key PPG Tasks

Heart Rate Estimation

Hardware-aware NAS applied to the UCI PPG-DaLiA and BIDMC datasets has produced architectures with mean absolute errors below 2.5 BPM during moderate activity while fitting within 50 KB of weight storage and running in under 20 ms on Cortex-M4 hardware. Comparable hand-designed networks typically require 200 to 400 KB and 40 to 80 ms for similar accuracy, so the NAS results represent roughly a four- to eightfold reduction in weight storage and a two- to fourfold reduction in latency. The NAS-discovered architectures tend to use a mix of dilated and standard convolutions in the early layers to handle motion artifacts, with narrow bottleneck channels that shrink the intermediate activation buffers responsible for memory pressure.

The deep learning for PPG heart rate article covers the broader range of neural network approaches before hardware constraints are applied.

Atrial Fibrillation Detection

AF detection from PPG is a more demanding classification task than heart rate regression because the model must capture irregular inter-beat intervals across variable-length segments. NAS search spaces for AF typically include recurrent or attention nodes to handle the sequential nature of the task, and hardware-aware objectives are tuned to the slightly more capable processors found in smartwatches (Cortex-A class, 512 KB to 2 MB SRAM).

A study by Hannun et al. established strong cardiologist-level benchmarks for arrhythmia detection from ECG using deep networks (https://doi.org/10.1038/s41591-018-0268-3), and subsequent PPG-based NAS work has used similar evaluation frameworks to benchmark discovered architectures. The NAS-discovered models for AF detection on PPG achieve sensitivity above 90% and specificity above 93% in held-out test sets, while remaining deployable on wrist-worn hardware without cloud offload.

Blood Pressure Estimation

BP estimation from PPG is an active area where NAS is still proving its value. The task is inherently harder because the physiological relationship between waveform morphology and blood pressure is nonlinear and subject-dependent. NAS search spaces for BP estimation often include larger temporal receptive fields (30 to 60 seconds of signal) and multi-branch architectures that separately process different frequency components before fusing them. Early results suggest NAS-discovered architectures match or slightly outperform hand-designed counterparts with 30 to 50% fewer parameters, though the absolute accuracy of PPG-based BP estimation remains below the clinical threshold for most use cases.

Practical Considerations for PPG NAS Pipelines

Running NAS from scratch requires significant compute, typically hundreds to thousands of GPU-hours for a full evolutionary or reinforcement learning search, and tens of GPU-hours even for a differentiable one. For most teams building PPG wearables, the more practical path is to start from a published NAS-discovered architecture, retrain it on proprietary data, and apply lightweight neural architecture optimization through fine-grained pruning or knowledge distillation rather than a full search.

The knowledge distillation for PPG article covers that approach in detail. Distillation and NAS are complementary: NAS finds the right topology, and distillation compresses a trained instance of that topology further by transferring knowledge from a larger teacher.

For teams that do run NAS, a few practical guidelines apply. First, instrument the search with real on-device latency measurements rather than relying solely on FLOP proxies. Even a small lookup table of measured operation costs on the target chip dramatically improves the quality of hardware-aware Pareto fronts. Second, include quantization-aware training from the start, since late-stage quantization of a full-precision NAS-discovered model often degrades accuracy more than expected. Third, restrict the search space to architectures with fewer than four memory access stalls per inference window, as memory bottlenecks are the primary cause of latency overruns on microcontrollers.

The real-time PPG processing on embedded systems article walks through the deployment pipeline after an architecture has been selected, covering firmware integration, ring-buffer management, and interrupt-driven sampling.

FAQ

What is Neural Architecture Search in the context of PPG?

NAS is an automated method for finding the best neural network design for a given task and hardware target. For PPG, it searches over combinations of 1D convolutional layers, dilation rates, skip connections, and optional recurrent blocks to find a network that balances heart rate or arrhythmia detection accuracy against the memory, latency, and power limits of wearable chips.

How does hardware-aware NAS differ from standard NAS?

Standard NAS optimizes only prediction accuracy. Hardware-aware NAS adds hardware objectives to the search, penalizing architectures that exceed a target latency or energy budget. This forces the search toward Pareto-optimal designs that are both accurate and deployable, rather than producing large, accurate models that cannot run on the intended device.

Can NAS-discovered PPG architectures really outperform hand-designed ones?

Yes, on efficiency metrics. NAS-discovered architectures for heart rate estimation consistently achieve accuracy equal to or better than that of hand-designed competitors while using two to four times fewer parameters and running two to three times faster on microcontrollers. The gains come from the search exploring connectivity patterns and kernel combinations that human designers do not typically consider.

How long does a PPG NAS search take?

Full evolutionary or reinforcement learning NAS can take hundreds to thousands of GPU-hours. Differentiable NAS (DARTS-style) reduces this to tens of GPU-hours by relaxing the discrete search to a continuous optimization. Once-for-all methods amortize the cost by training one large supernet and extracting many sub-networks without retraining. For most teams, starting from a published NAS result and fine-tuning is more practical than running a search from scratch.
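The continuous relaxation behind DARTS-style search can be shown in miniature. Instead of picking one operation per position, the search mixes all candidates with softmax weights over learnable scores. In the sketch below, plain Python functions on a scalar stand in for real layers, and the names `mixed_op` and `alphas` are illustrative.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def mixed_op(x, ops, alphas):
    """Weighted sum of all candidate operations applied to the same input."""
    weights = softmax(alphas)
    return sum(w * op(x) for w, op in zip(weights, ops))


# Stand-ins for candidate layers: identity, a scaling "conv", and a zero op.
ops = [lambda x: x, lambda x: 3.0 * x, lambda x: 0.0]
alphas = [0.0, 0.0, 0.0]  # equal scores give equal mixing weights

print(mixed_op(2.0, ops, alphas))
```

In an actual differentiable search the scores are trained by gradient descent alongside the network weights, and the operation with the largest weight at each position is kept when the final discrete architecture is extracted.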

What search space parameters matter most for PPG signals?

Kernel size and dilation rate matter most for capturing PPG's characteristic frequency content. Larger kernels or higher dilation capture the slow baseline; smaller kernels capture pulse waveform details. The presence or absence of skip connections between distant layers also matters significantly, as it allows the network to maintain both coarse and fine temporal representations simultaneously.

How does quantization interact with NAS for wearable PPG?

Quantization reduces weight and activation precision to 8-bit or 4-bit integers, cutting memory footprint and enabling faster integer arithmetic on fixed-point hardware. The best results come from quantization-aware NAS, where the search evaluates each candidate in its quantized form rather than in full floating-point precision. This prevents the search from selecting layers that are accurate in float but degrade badly when quantized.

Is NAS practical for small teams developing PPG wearables?

For most small teams, full NAS from scratch is not practical due to compute costs. The practical approach is to start from a published NAS-discovered architecture suited to PPG 1D signals, retrain on proprietary data, and combine with knowledge distillation or structured pruning for final size reduction. The architecture topology from NAS still provides a strong starting point that typically outperforms ad-hoc hand-designed alternatives.