Multimodal Transformers for PPG: Fusing Pulse, Motion, and ECG

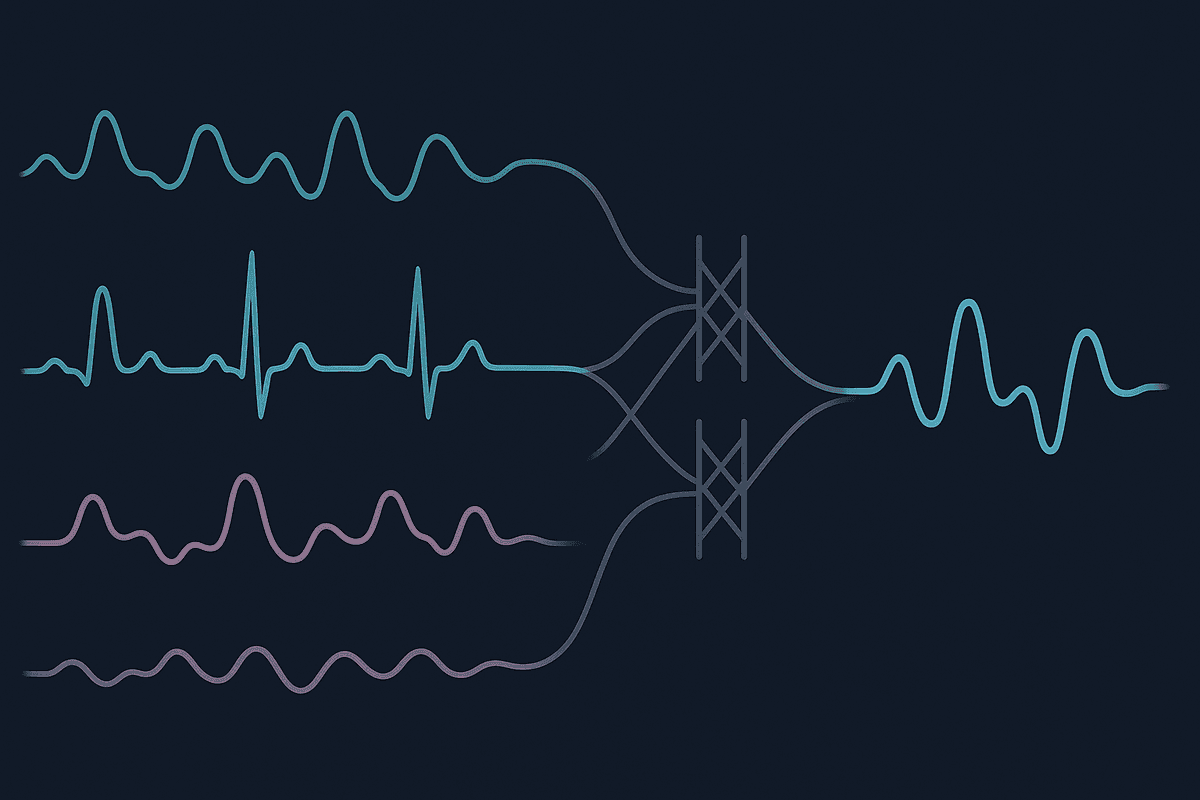

Multimodal transformers fuse photoplethysmography with accelerometer, ECG, and respiration inputs using cross-attention, improving motion robustness, blood pressure estimation, and arrhythmia screening beyond what any single sensor achieves alone.

Multimodal transformers improve PPG analysis by letting a model jointly attend to pulse waveforms, accelerometer motion, ECG timing, and respiration patterns, so it can separate true cardiovascular structure from noise, preserve context across time, and deliver better motion robustness, blood pressure estimation, and arrhythmia screening than a single-sensor PPG pipeline.

Photoplethysmography, or PPG, is attractive because it is cheap, comfortable, and already embedded in watches, rings, patches, and phones. But PPG also has a structural weakness: the optical waveform is highly sensitive to motion, contact pressure, local perfusion, skin-tone-related signal amplitude differences, ambient light leakage, and device placement. A model that sees only PPG has to infer whether a sudden shape change came from physiology or from artifact. Sometimes it can, but often it cannot with high confidence.

That is why multimodal transformers are becoming so important for wearable sensing. Instead of treating accelerometer, ECG, or respiration as optional side channels, a multimodal transformer treats them as complementary evidence streams. Each modality provides a different view of the same underlying cardiovascular state. PPG contributes pulse morphology and peripheral vascular timing. Accelerometers explain motion context. ECG anchors electrical cardiac timing. Respiration provides slower oscillations that modulate heart rate, venous return, and pulse amplitude. Cross-attention gives the model a way to decide which stream should inform which decision at each moment.

If you want a primer on transformer architectures in this space, start with our overview of PPG transformer models. If your main concern is artifact handling, our guide to PPG motion artifact removal is a useful companion. And if you are building production pipelines, PPG signal quality assessment should sit next to any fusion strategy, because multimodal models are strongest when the system knows which segments are trustworthy.

Why single-sensor PPG reaches a ceiling

Traditional PPG pipelines often depend on hand-crafted preprocessing, beat detection, feature extraction, and then a classifier or regressor. Deep learning pipelines improve flexibility, but when they use only one sensor they still inherit an ambiguity problem. Consider three examples:

- A user jogs uphill and the wrist moves sharply. PPG amplitude shifts, baseline wanders, and beat shapes deform. Without motion context, the model may misread artifact as irregular rhythm.

- A user has a short ectopic beat sequence. PPG reflects the hemodynamic consequence of the beat, but not the exact electrical onset. Without ECG, the timing picture is incomplete.

- A user changes breathing depth during sleep or relaxation. Respiration modulates pulse amplitude and interval variability. Without a respiration channel, those oscillations can look like nuisance variation rather than physiological structure.

In all three cases, the core issue is not model size. It is missing information. A smarter single-channel model still has to guess. A fusion model gets to compare evidence across sensors.

This matters because wearable health applications increasingly target tasks that are context-dependent rather than purely pattern-based. Blood pressure estimation depends on vascular tone, timing, and waveform morphology. Arrhythmia screening depends on distinguishing real irregularity from motion contamination. Stress, sleep, and recovery tracking depend on low-frequency autonomic dynamics. A model that sees multiple synchronized sensors has a better chance of learning the latent physiology behind the observations.

What a multimodal transformer actually does

A multimodal transformer usually starts with sensor-specific encoders. Raw or lightly processed PPG, accelerometer, ECG, and respiration signals are split into windows or tokens. Those tokens may represent fixed time patches, beats, learned embeddings, or frequency-time segments. Each modality is projected into a latent space, often with positional or temporal embeddings that preserve order.

From there, fusion can happen in several ways:

- Early fusion, where all tokens are mixed early and the model learns joint structure from the beginning.

- Late fusion, where each modality is encoded separately and only combined near the prediction head.

- Hybrid fusion, where modality-specific layers preserve local structure first, then cross-attention layers exchange information.

- Cross-attention fusion, where one modality queries another, allowing targeted information transfer instead of indiscriminate pooling.

Cross-attention is especially useful for physiological sensing because the modalities are not interchangeable. ECG often provides precise event timing, PPG provides peripheral waveform shape, accelerometer provides motion explanation, and respiration provides slower contextual modulation. Cross-attention lets the model ask focused questions such as:

- Which accelerometer segments explain this suspicious PPG distortion?

- Which ECG events line up with delayed or abnormal pulse arrivals?

- Which respiration phase best explains cyclic changes in pulse amplitude?

- Which modality should dominate the prediction when another one becomes noisy?

That selective reasoning is a major step forward from concatenating features and hoping a downstream network sorts things out.

Broader transformer literature, including foundational work on token-based representation learning and later multimodal attention strategies, supports this direction. In practice, sensor fusion works best when the architecture respects what each signal knows rather than flattening everything into one undifferentiated stream.

The role of accelerometer signals in fusion

Accelerometer channels are often the most underappreciated part of wearable cardiovascular modeling. Many teams treat them only as activity labels or artifact flags. In a multimodal transformer, they can do much more.

When a device moves, the accelerometer reveals not just that motion occurred, but how it occurred. The direction, intensity, periodicity, and onset pattern can tell the model whether a PPG disturbance is likely due to running cadence, arm swing, loose contact, posture change, or random impact. That matters because different motion regimes corrupt PPG in different ways.

Cross-attention helps the model map motion patterns to waveform consequences. For example, if a burst of tri-axial acceleration occurs exactly when the PPG waveform loses dicrotic structure and develops step-like baseline jumps, the model can down-weight those PPG tokens. If light periodic motion appears but the pulse morphology remains coherent across several beats, the model may keep trusting the PPG while using the accelerometer only as context.

This is more nuanced than hard artifact rejection. A useful wearable model should not throw away every motion segment. Real-world deployment means users walk, cook, commute, gesture, and exercise. The goal is to recover useful physiology during motion, not just detect when motion happened. That is one reason multimodal transformers are promising for continuous blood pressure estimation and ambulatory heart monitoring.

Why ECG adds more than redundancy

PPG and ECG are related, but they are not duplicates. ECG measures the heart's electrical activity. PPG measures the optical consequence of blood volume changes in peripheral tissue. The delay between the electrical event and the peripheral pulse carries physiological information about vascular stiffness, pulse transit, and beat-to-beat hemodynamics.

A multimodal transformer that fuses ECG and PPG can learn several valuable relationships:

- Beat alignment between electrical depolarization and pulse arrival

- Differences between nominally similar ECG beats that produce different peripheral pulse shapes

- Loss of reliable PPG morphology during poor perfusion or motion, while ECG remains readable

- Timing patterns useful for blood pressure estimation and arrhythmia screening

For arrhythmia tasks, ECG can anchor the true rhythm while PPG contributes a peripheral hemodynamic view. That is especially valuable because some rhythm disturbances produce subtle or inconsistent pulse manifestations. A fusion model can learn when the PPG is sufficient and when the ECG should dominate. In other settings, such as cuffless blood pressure estimation, the joint timing and morphology relationship between ECG and PPG often carries much richer information than either modality alone.

This does not mean every product needs ECG all the time. It means that when ECG is available, even intermittently through a patch, chest strap, or clinical calibration session, a transformer can use it as a privileged modality during training or deployment. That creates room for smarter semi-supervised and transfer learning strategies in wearable health systems.

Respiration is slow, but it is not secondary

Respiration is sometimes treated as background variation, yet it shapes cardiovascular signals continuously. Breathing changes intrathoracic pressure, venous return, autonomic balance, heart rate variability, and peripheral pulse amplitude. In PPG, these effects often appear as low-frequency modulation layered on top of beat-level structure.

A multimodal transformer can use respiration to distinguish nuisance drift from meaningful physiology. For example, slow oscillations in pulse amplitude during paced breathing should not be interpreted the same way as sudden amplitude collapse from contact loss. Cross-attention allows the respiration stream to inform the PPG stream about expected modulation phase and timescale.

This matters for sleep analysis, stress tracking, autonomic assessment, and nocturnal cardiorespiratory monitoring. It also matters for signal quality. Some segments that look unstable in isolation become interpretable when respiration is visible. Conversely, if respiration disappears or becomes implausible while PPG also degrades, the model can lower confidence more intelligently.

In future wearable stacks, respiration may come from chest bands, impedance signals, derived respiratory surrogates, microphones, or even separate radar systems. The important point is not the exact sensor. It is that the transformer has a mechanism to incorporate respiratory context without forcing rigid handcrafted coupling rules.

Cross-attention is the key fusion mechanism

The phrase "multimodal transformer" can sound broad, but the real design question is how information moves between modalities. Cross-attention is powerful because it permits asymmetric, task-specific exchange.

Imagine a PPG token at time t that contains an ambiguous waveform distortion. A self-attention block over PPG alone can compare that token with other PPG tokens. That helps, but it remains blind to cause. A cross-attention block lets that same token query accelerometer tokens near t, ECG tokens around the preceding R-peak, and respiration tokens spanning the local breathing phase. The model can then reinterpret the distortion in context:

- If accelerometer energy spikes at the same instant, the distortion may be motion-driven.

- If ECG timing remains stable and pulse arrival shifts modestly, the change may reflect vascular dynamics rather than ectopy.

- If the distortion follows a respiratory cycle, it may be physiologically expected.

- If none of the supporting modalities explain it, the model may flag low confidence or possible pathological change.

That is why cross-attention often outperforms naive feature fusion in wearables. Physiological signals are synchronized but not identical. The model needs structured interaction, not just bigger input tensors.

Another advantage is graceful degradation. In practice, some modalities will be missing, noisy, or asynchronous. A well-designed cross-attention system can mask absent modalities, learn confidence weighting, and avoid catastrophic failure when one sensor drops out. For consumer wearables, that robustness is essential.

High-value use cases for multimodal PPG transformers

1. Motion-robust heart rate and rhythm monitoring

This is the most immediate application. Wrist PPG is useful at rest but fragile during daily activity. By attending to accelerometer context, a multimodal transformer can preserve usable heart rate and rhythm information during movement instead of resorting to aggressive rejection. That makes continuous monitoring more realistic in the real world, not just in sedentary validation datasets.

2. Blood pressure estimation

Cuffless blood pressure estimation is a hard problem partly because peripheral pulse morphology alone is not enough. Fusion with ECG adds timing information related to pulse arrival and vascular dynamics. Respiration adds context about autonomic and intrathoracic influences. Cross-attention can learn when morphology matters most, when timing matters most, and how those factors shift across posture, stress, and activity.

3. Arrhythmia screening

PPG can detect irregularity, but screening accuracy depends heavily on signal quality and context. ECG provides a direct rhythm anchor, while accelerometers explain confounding motion. A multimodal transformer can therefore reduce false positives caused by artifact and improve confidence when the rhythm is truly abnormal. That is particularly relevant for atrial fibrillation screening and opportunistic wearable monitoring.

4. Signal quality aware inference

A strong fusion model should not only produce predictions, it should estimate whether those predictions deserve trust. Multimodal attention naturally supports this by comparing the agreement between sensors. If PPG is noisy but accelerometer clearly explains the corruption, the model can abstain or smooth its output. If ECG and PPG disagree in a clinically meaningful way, the system can escalate. This is where fusion and signal quality assessment start to merge.

5. Sleep and recovery analytics

During sleep, respiration and cardiovascular dynamics are tightly coupled. PPG plus respiration can help distinguish respiratory-induced variability, awakenings, movement, and potential apnea-related disturbances. If motion context is also available, the model can separate body repositioning from autonomic shifts. For recovery and readiness applications, that richer context improves interpretability and stability.

Design considerations that matter in practice

The architecture alone is not enough. Multimodal PPG systems live or die by implementation details.

Time synchronization is the first requirement. Cross-attention is only useful if modalities are aligned well enough for the model to compare related events. Even small timestamp drift can damage learned relationships.

Sampling rate mismatch is the next challenge. ECG, PPG, respiration, and accelerometer streams may arrive at different frequencies. Tokenization strategies must preserve useful temporal detail without creating an unmanageable sequence length.

Missing modality handling should be designed from the start. In real deployments, a respiration channel may be absent, an ECG patch may disconnect, or accelerometer axes may saturate. Training with masking and dropout across modalities helps the model remain useful under partial observation.

Label quality matters more than ever. If fusion models are trained on noisy blood pressure labels or poorly adjudicated arrhythmia events, the additional sensor richness may simply help them overfit label noise more effectively.

Interpretability is also important. Attention maps are not perfect explanations, but they can still show whether the model uses accelerometer spikes, ECG anchors, or respiratory phase in plausible ways. For clinical or regulated settings, that kind of sanity check is helpful.

Deployment cost must be considered. Multimodal transformers can be heavier than classical models. Distillation, sparse attention, patching, and modality-specific lightweight encoders may be needed for on-device inference. Fortunately, not every task requires a giant foundation model. Many wearable use cases can benefit from modest fusion architectures if the design is disciplined.

Where the field is heading

The larger trend is clear: wearable AI is moving from isolated signal interpretation toward context-aware physiological modeling. Transformer methods made it easier to represent long-range dependencies and heterogeneous inputs. Multimodal variants extend that strength to real sensor ecosystems.

For PPG, this is especially consequential because the modality is both valuable and vulnerable. Valuable, because it is inexpensive and ubiquitous. Vulnerable, because it is easily distorted. Multimodal transformers reduce that vulnerability by letting the model ask which other sensors can clarify the pulse signal right now.

The next wave will likely include better self-supervised pretraining on unlabeled wearable data, more flexible handling of missing sensors, and architectures that combine short beat-level detail with longer context windows. Some systems will use ECG only during calibration. Some will infer respiratory surrogates from PPG and motion jointly. Some will learn personalized fusion patterns that adapt to a specific wearer, device fit, and activity profile.

What should not be expected is magic. Fusion does not fix bad data collection, poor synchronization, or sloppy evaluation. But when the data pipeline is sound, multimodal transformers offer a practical path beyond the ceiling of single-sensor PPG analysis.

Practical takeaway

If you are building with PPG today, the key question is not whether to keep improving the optical model. You should. The bigger question is whether you are forcing PPG to solve problems alone that really require context. Motion, timing, and respiration are not distractions from cardiovascular modeling. They are part of it.

That is why multimodal transformers are so promising. They let PPG remain the central signal while acknowledging that robust cardiovascular inference emerges from relationships between signals, not from one waveform in isolation. For teams building next-generation wearables, that shift from isolated classification to structured fusion may be the difference between a lab demo and a dependable real-world system.

FAQ

What is a multimodal transformer in the context of PPG?

It is a transformer model that processes PPG alongside additional synchronized signals such as accelerometer, ECG, or respiration. Instead of relying on one waveform alone, it learns relationships across sensors to improve prediction accuracy and robustness.

Why is cross-attention useful for wearable sensor fusion?

Cross-attention lets one modality selectively query another. In practice, that means ambiguous PPG segments can look to accelerometer data for motion context, ECG for cardiac timing, or respiration for low-frequency modulation. This targeted exchange is often more effective than simply concatenating features.

Does adding more sensors always improve PPG model performance?

No. Extra sensors help only when they are synchronized, informative, and modeled well. Poor alignment, noisy labels, or missing data handling can negate the benefit. Good fusion design matters as much as sensor count.

How does ECG help blood pressure estimation with PPG?

ECG adds electrical cardiac timing, which can be compared with peripheral pulse arrival in PPG. That timing relationship, combined with pulse morphology, often carries more information about vascular dynamics than PPG alone.

Can multimodal transformers reduce motion artifacts instead of just rejecting them?

Yes. That is one of their main advantages. With accelerometer context, the model can learn which waveform changes are motion-induced and which still preserve usable physiological content, allowing partial recovery rather than blanket rejection.

Is respiration really necessary for PPG analysis?

Not for every task, but it is very useful when respiratory modulation affects the cardiovascular outcome, such as sleep analysis, autonomic assessment, stress tracking, and any setting where slow oscillations in pulse amplitude or interval matter.

Are multimodal transformers practical for consumer wearables?

Increasingly, yes. Full-size research models may be too heavy for edge deployment, but compact fusion architectures, distillation, and hybrid on-device plus cloud inference make them increasingly feasible.

References

- arXiv:2106.11959, relevant multimodal transformer reference for cross-modal fusion in sequence modeling. https://arxiv.org/abs/2106.11959

- Nature Digital Medicine wearable physiological deep learning study. https://doi.org/10.1038/s41746-019-0136-7

- Dosovitskiy et al., "An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale." https://arxiv.org/abs/2010.11929